This blog is the second of a set of ideas used as material for webinars delivered with Century Learning where I set out some key ideas about assessment that might be useful to support teacher CPD. Here is the first post:

Understanding Assessment: 20 CPD slides.

I’ve delivered multiple presentations and webinars about assessment for schools as training and, more recently, as part of a joint initiative with Century Learning. I’ve used these slides on multiple occasions – so I thought I’d share them here with brief explanatory notes. Purpose. Assessment serves different purposes. Some assessments – mostly the formative kind…

And here is Part Two.

(Slide deck at the bottom)

Assessment for student agency.

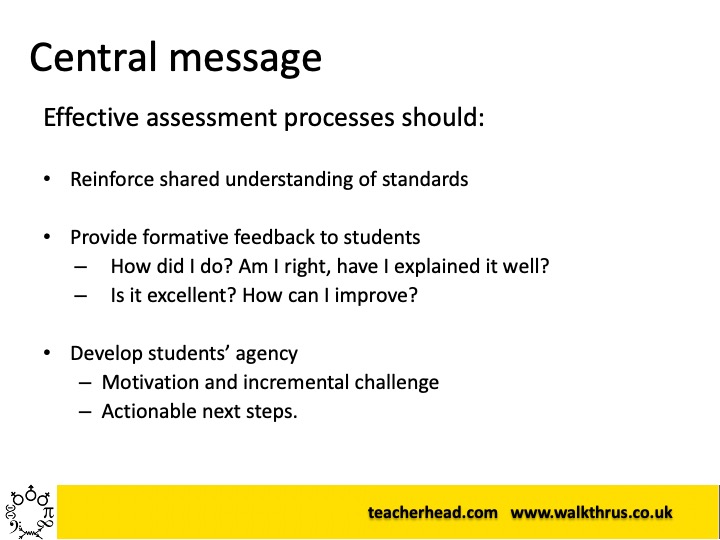

The main thrust of this presentation is that assessment needs to support students to develop an understanding of their own progress; their capabilities; their knowledge and knowledge gaps – motivating them to take actionable steps to go further in the learning process. It’s not primarily about providing information to the teacher or the system (although this has a role) – ideally assessment should focus on the students’ knowledge of themselves.

Data in perspective: students should know themselves.

We really must keep centralised data collection in perspective. Of course some data is useful – but, in the main, if students have a strong understanding of where they are along a learning path, then that’s the most important thing. The data may support the process from a distance, but it’s never the main thing; the main purpose.

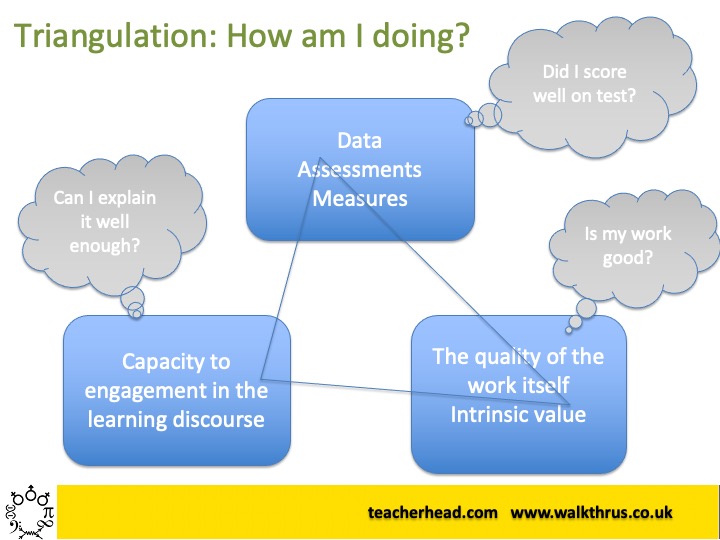

Triangulation

Students understand their sense of ‘how am I doing’ much the same way that teachers do, by triangulating various sets of information: their sense of their understanding – eg how well they can explain things in discussions; the quality of the work they produce – against some sense of the range of relevant standards; and their performance in assessments, their scores and their sense of whether these are good scores.

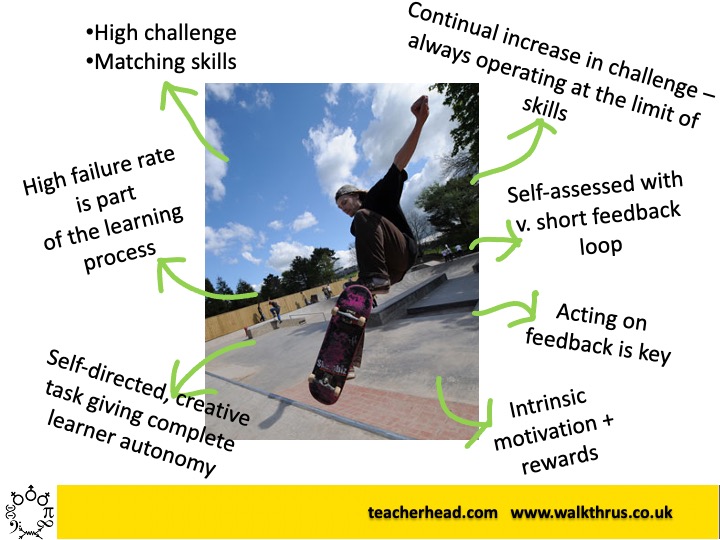

Self-directed learning: feedback loops.

Skateboarding at the skate park offers an example and metaphor for self-directed learning where high challenge, high motivation and autonomy are linked to short self-generated feedback loops. Boarders know when they’ve done well; they know what standards mean; they’re motivated to improve continually. It’s intrinsically rewarding. Learning goals are constantly adjusted to provide incremental challenge.

Reality Check: Instructional teaching is vital.

In truth, most kids don’t go down to the skatepark; they’d need support and guidance to get to the point where they could be the self-directed learners of our dreamy idealised scenario. If we want more children to thrive in that self-directed, autonomous independent learning mode, then we need to strengthen the instructional teaching that builds secure foundations of confidence, knowledge and skill. But, of course, we do also then reduce guidance and feedback and ensure students start to generate it for themselves. We need to let go – otherwise they will forever rely on external feedback.

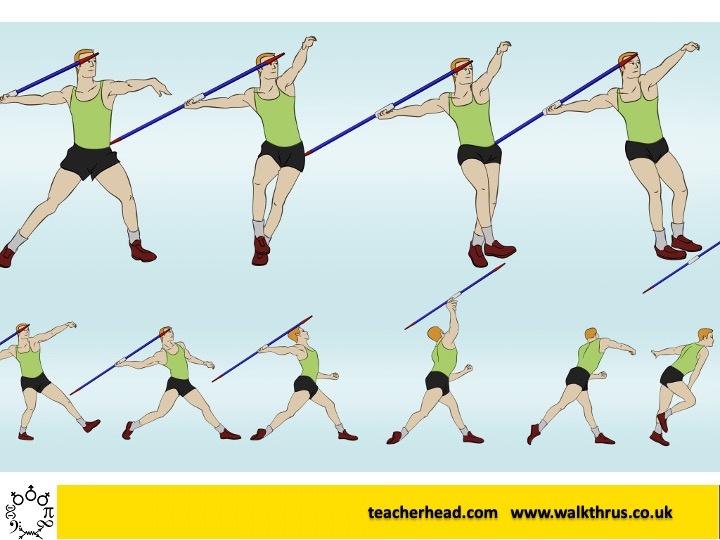

Actionable feedback: Measures and strategies.

If Jason the javelin-thrower is going to improve, throwing further, he needs to know how far he is throwing and what the range of strategies is that might support him to throw further – so he knows what to apply effort to in order to improve and whether he is succeeding. If he has no idea how far the javelin goes, he can’t adjust his effort or strategy selection.

Briceño’s Learning and Performance Zones.

Students need plenty of time in the ‘learning zone’. This is where they focus on specifics and safe, low-stakes rehearsal; mistakes inform the learning. Nobody is looking.

But students must also learn to step it up in order to perform. Performing well when it matters is part of life – here we deliver at our best; we optimise performance and minimise error. However, we don’t get better in the Performance Zone unless most of our time is spent in the Learning Zone. Both matter.

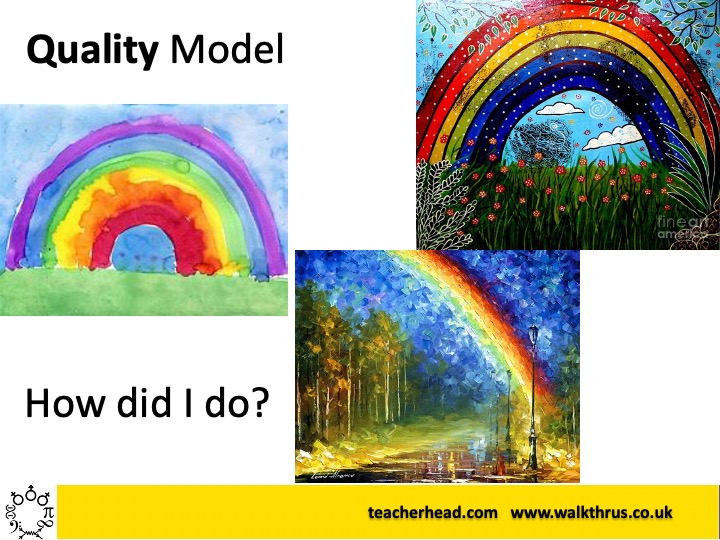

Quality Model assessment needs exemplification

Very often standards are hard to define through language alone – eg with criteria statements. It’s often essential to show what standards mean by comparing examples. Students can develop an internal schema for standards by examining contrasting exemplars and lining their own work alongside them to determine the extent of their success. They can then self-regulate as they produce work, aiming for a standard derived from this process; they don’t just ‘hit and hope’.

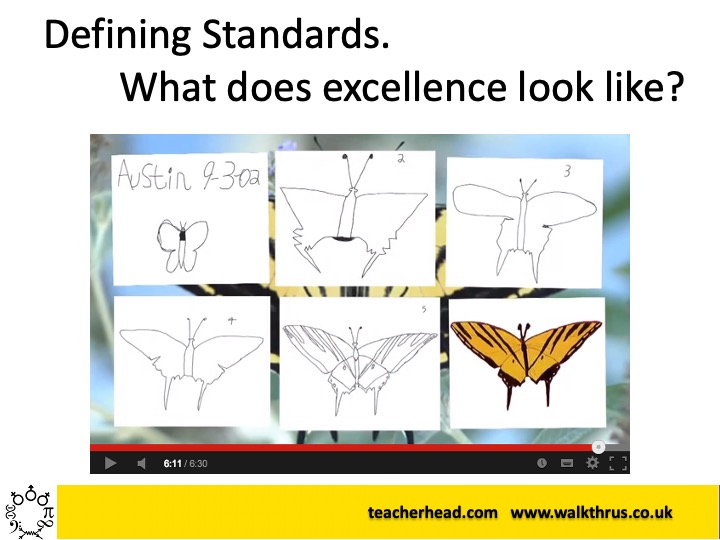

Engaging Students in Defining Standards.

As Ron Berger illustrates in the classic Austin’s Butterfly video, involving students in processes such as peer critique supports them to develop a sense of standards and progression pathways: ie knowing what excellence might look like and what steps we might take to reach it.

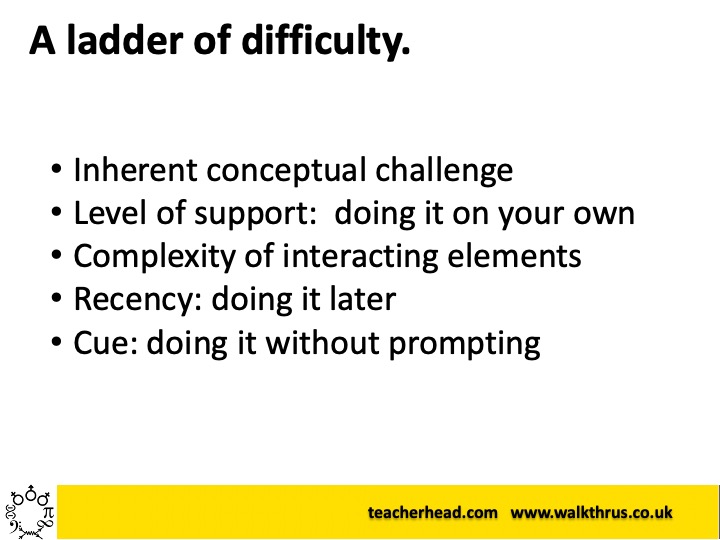

Incremental Challenge: A ladder of difficulty

If students are going to emulate the idealised self-directed skateboarders, they need a sense of progression steps that constitute ever-increasing challenge. This can be tackling ‘harder questions’ but can also involve getting less help, mixing more concepts together, returning to material after time has passed since it was taught or removing ‘cue’ – those prompts that indicate which knowledge is required for any given task.

What do I need to do here?

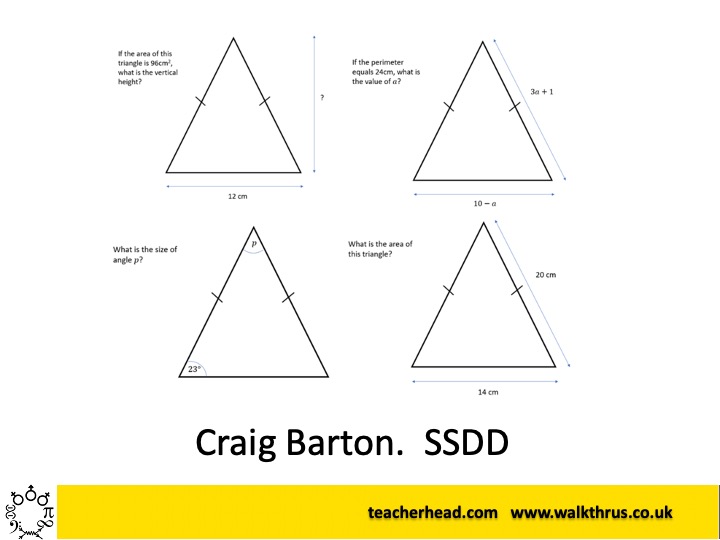

A strong example of developing students’ independent thinking, with ‘cue’ removed, is the use of Same Surface, Different Deep structure maths problems – as in Craig Barton’s superb site. Students learn the habit of approaching problems with the same initial enquiry: ‘what do I need to do?’ They learn not to panic but to explore their knowledge over a range of topics and concepts to find contender strategies to solve the problem. This approach can be developed in other subjects.

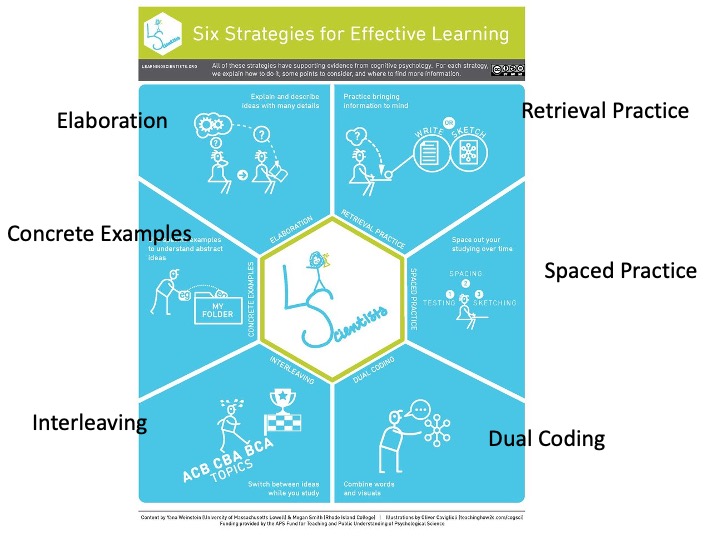

@AceThatTest: The Learning Scientists’ Six.

It’s important to remember that the strategies promoted by the awesome Learning Scientists are designed for students to use themselves: they are study strategies, not teaching strategies. That said, it’s important to model each of them explicitly so students know how to use them. Students can then develop their agency around effective studying, using retrieval practice and spaced practice, for example.

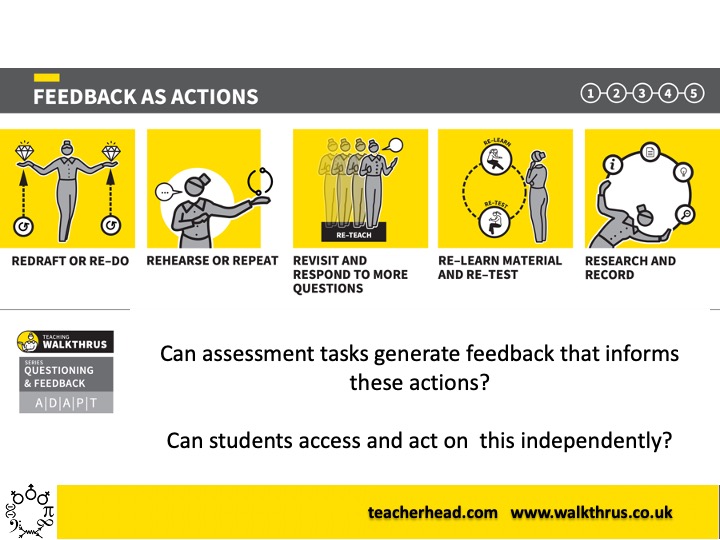

Feedback must suggest actions.

In line with the idea that teacher feedback should suggest actions students can take, if students are generating their own feedback based on assessment information, then the actions they need to take must still be part of the process. This isn’t always easy but can be built into routines: if you find out X about your work, then do Y. Eg routines around practising, redrafting, re-reading, re-studying and re-testing – at the right point.

Don’t Do Can Do.

This is just a dead end piece of assessment thinking as it encourages students to be over-confident and self-limiting. Job done!

However it can be turned around. Instead of ticking off a list saying ‘I can explain ….’ – make it a prompt for a check: Explain why your heart beats faster during exercise. This invites a response of unspecified depth, each time it is encountered. It prompts students to explore and deepen their knowledge, not tick it off and move on. I think it is much more than a semantic change – it affects how students view themselves and their knowledge.

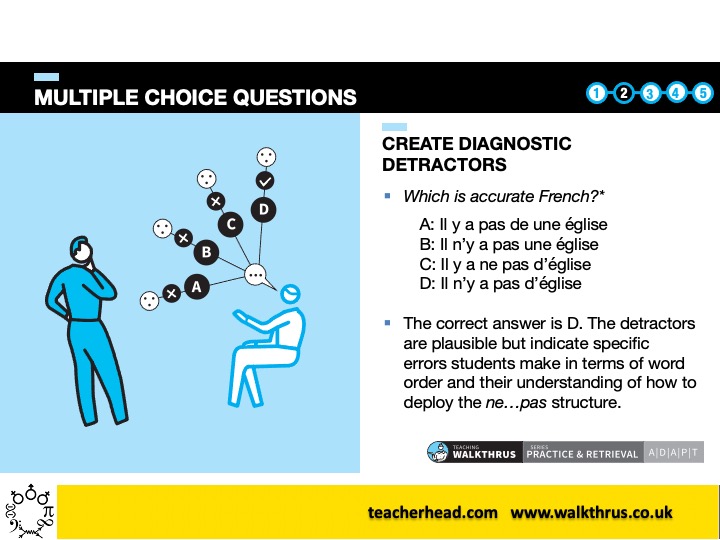

Multiple Choice Diagnostics.

Well-designed MCQs can be used by students as well as teachers as a diagnostic tool. If they test themselves and select a certain wrong answer, it indicates the possible source of their confusion or lack of knowledge, helping to direct them to the solution for plugging that gap. MCQs are quick to administer and can be used repeatedly, with time passing and subtle changes, to see if students are improving.

Practise Explaining. Schema building and fluency.

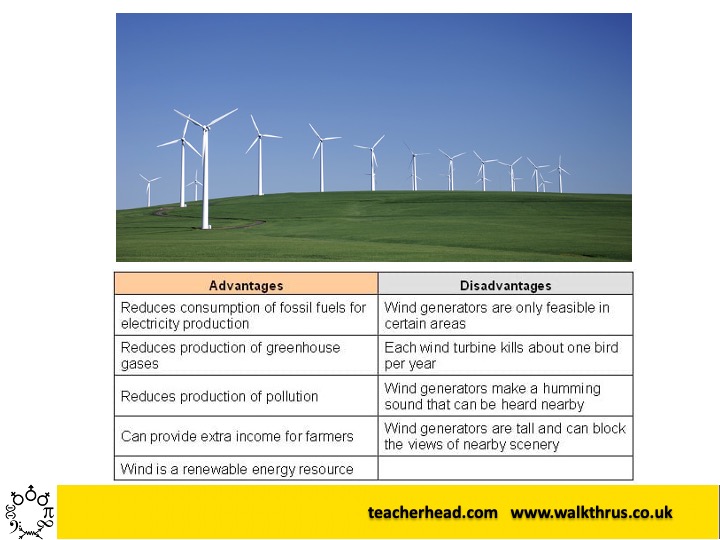

An important but under-used mode of formative assessment is supporting students to incrementally improve the depth of their explanations. Eg ‘Explain why we have wind farms.’ Looking at the image alone, as a prompt, we could all produce some kind of explanation. But then, after reviewing a prompt – such as the table shown – we might try again, attempting to improve our response: the range of factors, the subtle weighing up of competing factors, the sequence and depth of our explanation. With practice and short feedback loops, students can get better at explaining things.

Doing things and knowing things.

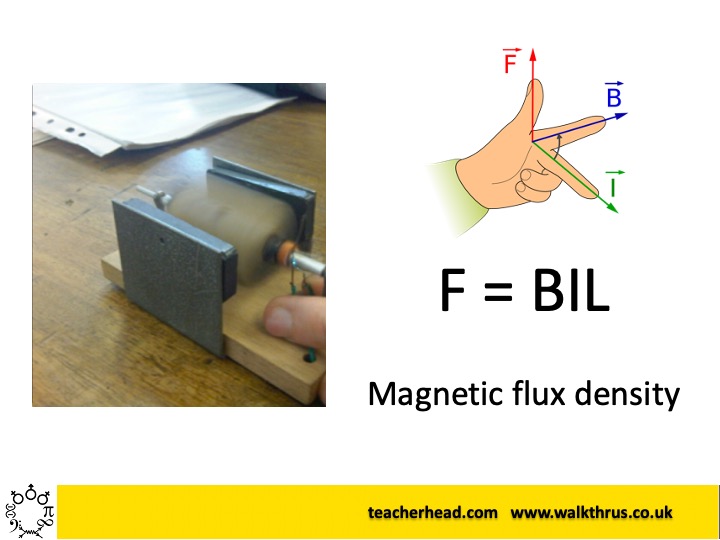

Of course, there are multiple goals in education. It might be that building a working motor is the goal – this has various practical, motor-skill elements that do not require a full theoretical understanding, yet lead to experiential tacit knowledge with real value. Similarly, applying a mathematical equation to a scenario is important in physics but requires no actual practical know-how. So, we need to ensure a range of learning goals are embraced and connected in our curriculum and weight their value in assessment terms. In this case there is value in both elements and they can become mutually reinforcing at a deep schema level.

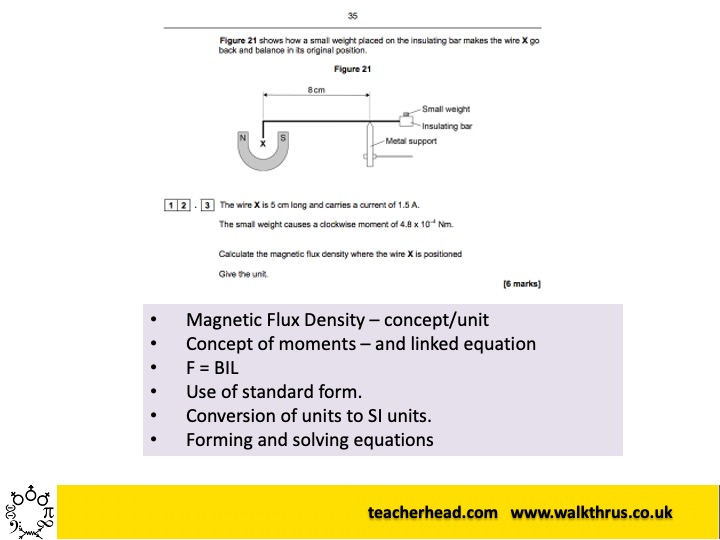

Identify Knowledge and Practice Components.

In learning to tackle complex problems with confidence – such as an ‘end of paper’ physics six-marker – it is helpful to be able to break them down into elements that can each be addressed in isolation and practised to fluency: explaining concepts, using terminology, applying spatial models, selecting mathematical operations, identifying common problem types and strategies. If this process is made explicit as part of the teaching process, students can develop the confidence to approach these problems themselves without giving up at the first hurdle – ie that the answer is not immediately apparent.

The value of instructional inputs.

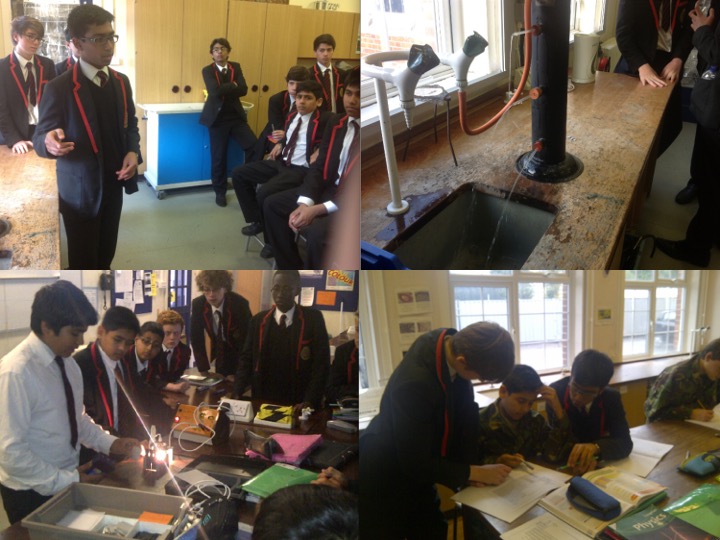

One special type of activity – often under-utilised – is for students to build the capacity to explain ideas to others via instructional inputs. Here, where Trevor is explaining the pressure can and Anwar is explaining the ray box experiment, they are practising explaining; developing a sense of their own understanding in doing so. In order to prepare for this moment of performance, they will have had to rehearse and refine their line of reasoning and powers of recall. This is a form of assessment – and, over time, giving all students these opportunities adds depth to their curriculum experience and their sense of themselves as students.
