I’ve delivered multiple presentations and webinars about assessment, both as training for schools and, more recently, as part of a joint initiative with Century Learning. I’ve used these slides on several occasions, so I thought I’d share them here with brief explanatory notes.

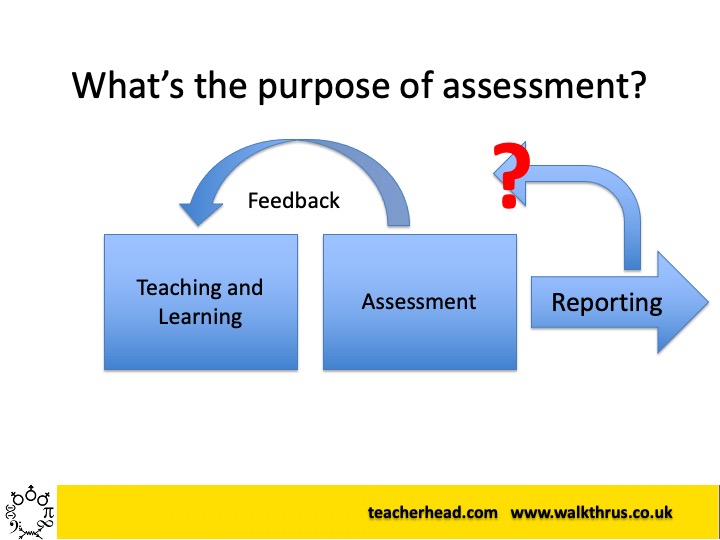

Purpose

Assessment serves different purposes. Some assessments, mostly of the formative kind, provide feedback to students and teachers that informs the teaching and learning process; this is the most important purpose. Some assessment modes can also serve to report progress to parents and other stakeholders, but reporting is much less likely to have an impact on future learning. So we must focus assessment on doing the most important thing, not morph it into a tool designed primarily around reporting.

Schema-building

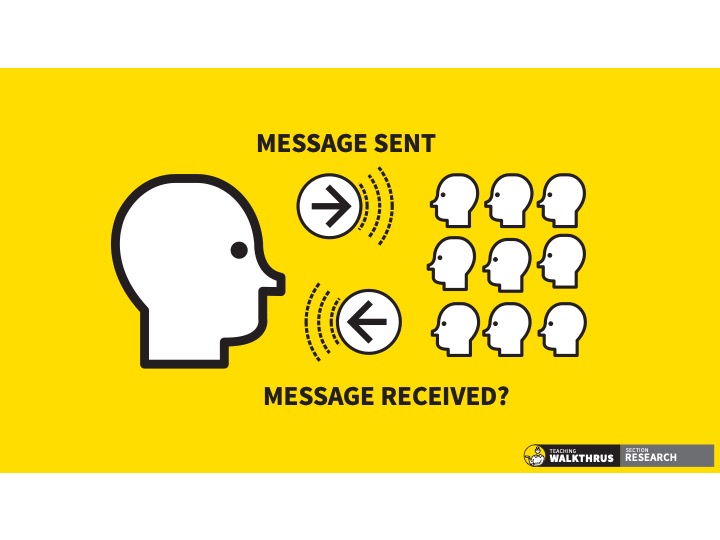

A component of learning, as students build their schema for any given knowledge domain, is a metacognitive process that drives motivation and intentionality: a knowledge of self. What do I know? What do I need to know or do, and where should I focus my attention and effort, in order to achieve my goals? Assessment needs to provide feedback to students as they engage in this process, so it has to be meaningful in terms they understand and highlight actions they can take.

Feedback is for teachers too.

As well as students knowing themselves, teachers need information about students’ learning that can help direct and redirect their instructional inputs and curriculum design. Again, this information has to take a form that carries maximum meaning for them in their own curriculum area.

Variation

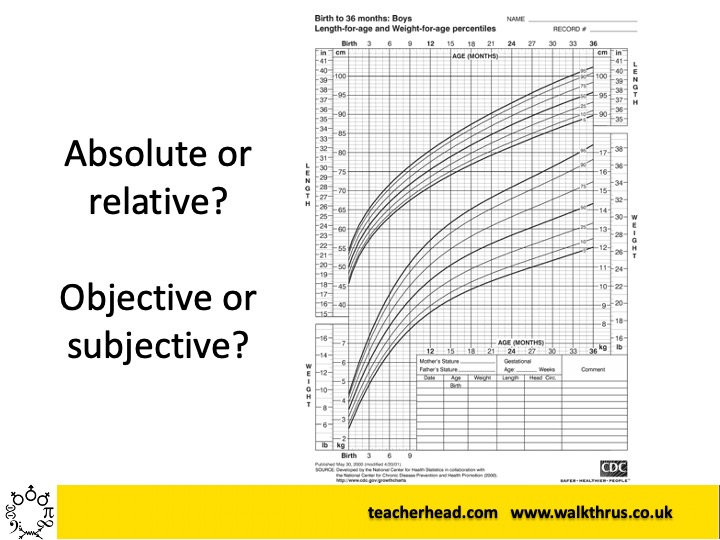

It’s an inherent feature of any group of people that their characteristics vary, and this includes their rate of progress or depth of learning in any given area. Every group is a mixed-attainment group. Comparative performance in learning informs our sense of standards, but the way we give meaning to performance across the range is subjective, driven by various, often competing, values: our desire to reward excellence and our wish for everyone to succeed.

Even absolute measures become relative

Nobody tells you their baby is 65cm long. Why? Because at 3 months this is the 95th percentile (very big), while at 7 months it is the 5th percentile (very small). The absolute measure is only meaningful in comparison to the cohort. There is nothing intrinsically good or bad about being big or small, but some form of comparison is implicit in how we generally understand human measures of many kinds. This applies to nearly all educational assessment measures too: we can only gauge our response (ideas of success, achievement, standards) against a backdrop of the range of performance in a cohort.
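
To make the point concrete, here is a minimal Python sketch of a percentile rank, using one common definition: the percentage of a cohort strictly below a given value. The two cohorts are small invented samples of baby lengths, not real growth-chart data; the only point is that the same 65cm reading lands at opposite ends of the range depending on which cohort it is compared with.

```python
def percentile_rank(value, cohort):
    """Percentage of the cohort strictly below `value` (one common definition)."""
    below = sum(1 for x in cohort if x < value)
    return 100 * below / len(cohort)


# Hypothetical lengths in cm, invented for illustration (not WHO or UK growth data).
younger_cohort = [57, 58, 59, 60, 60, 61, 61, 62, 63, 64]
older_cohort = [64, 66, 67, 68, 68, 69, 70, 70, 71, 72]

print(percentile_rank(65, younger_cohort))  # 100.0: bigger than everyone
print(percentile_rank(65, older_cohort))    # 10.0: near the bottom
```

The measurement never changes; only the comparison group does.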

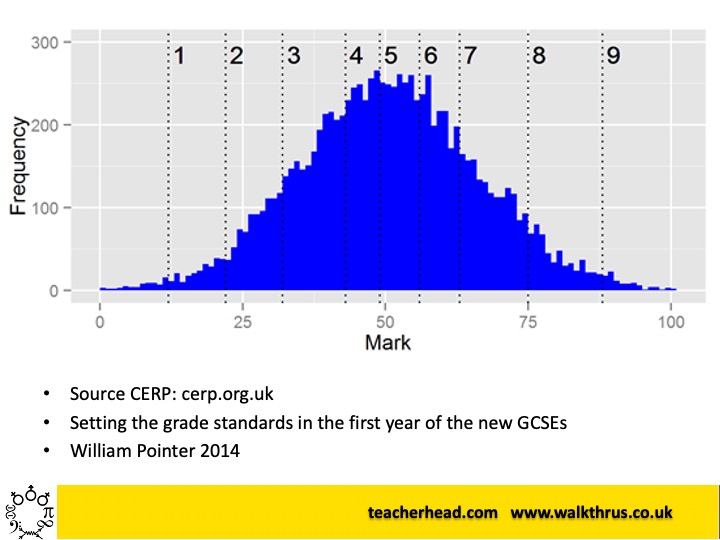

The Bell Curve and Grades

When several thousand students take the same maths test and their scores are scaled to a 0-100 range, the scores form a classic bell curve. The curve is not imposed on the data; it is an outcome of cohort performance, not dissimilar to variation in height. The grades, however, are entirely arbitrary: the number of grades and where the boundaries are placed have no fundamental basis; we invent them as a proxy for ideas about standards spanning the range of performance. Pass/fail thresholds are similarly arbitrary: they are determined by policy makers and accepted conceptually by stakeholders engaged in the system. Boundaries may shift when different tests are set and then compared, so that we can validly assert that standards are common between them.
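
The arbitrariness is easy to see in a small Python sketch. The scores, grade boundaries, labels and pass marks below are all invented for illustration and do not correspond to any real exam board’s scheme: the same scores are sliced two different ways, and moving a hypothetical pass mark changes how many students “pass” without any change in what they know.

```python
from bisect import bisect_right


def grade(score, boundaries, labels):
    """Map a score to a label; `boundaries` are inclusive lower cut-offs, ascending."""
    return labels[bisect_right(boundaries, score)]


def passes(scores, pass_mark):
    """Count scores at or above a (hypothetical) pass mark."""
    return sum(1 for s in scores if s >= pass_mark)


scores = [34, 48, 52, 61, 67, 73, 88]  # invented test scores out of 100

# Two invented grading schemes over the same scores; neither is "correct".
scheme_a = ([40, 55, 70], ["U", "C", "B", "A"])
scheme_b = ([30, 45, 60, 75, 90], ["1", "2", "3", "4", "5", "6"])

print([grade(s, *scheme_a) for s in scores])  # ['U', 'C', 'C', 'B', 'B', 'A', 'A']
print([grade(s, *scheme_b) for s in scores])  # ['2', '3', '3', '4', '4', '4', '5']
print(passes(scores, 50), passes(scores, 45))  # 5 6
```

The scores carry the information; the grade labels are a layer we choose to put on top of them.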

Crucially, 20% of children will always be in the bottom 20% (yup!) and so we need to find ways to give their learning value, motivating them to engage in the process of learning more – outside the framework of comparison with the cohort.
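
A short Python sketch shows why this holds by definition: the bottom 20% is defined by rank within the cohort, so even if every student improves, the absolute scores rise but there is still a bottom 20%. The scores are invented, and the uniform 10-mark improvement is an assumption chosen only to keep the example simple.

```python
def bottom_share(scores, percent=20):
    """Return the lowest `percent` of scores, rounded down to whole students."""
    k = len(scores) * percent // 100
    return sorted(scores)[:k]


cohort = [31, 38, 45, 52, 55, 60, 64, 70, 77, 85]  # invented scores
improved = [s + 10 for s in cohort]  # assume every student gains 10 marks

print(bottom_share(cohort))    # [31, 38]
print(bottom_share(improved))  # [41, 48]: higher scores, same two students at the bottom
```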

Heath Robinson!

Many data collection systems in schools are the equivalent of this Professor Branestawm potato peeler: far too complex for the job they do. Teachers already know most of the issues their students face, and the data machinery often doesn’t help them.

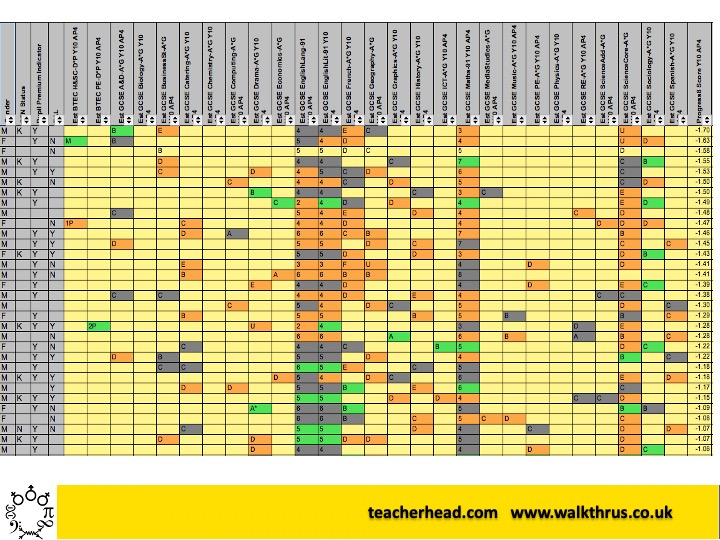

Data Delusions

Very often we assume a validity in data that isn’t there: for example, that a 6 in Geography has parity with a 6 in History, when no mechanisms exist to moderate the assessments. The flagging systems rarely tell teachers new information and, relative to the time taken to establish these data sets, their impact on learning for individual students is very low. None of these data points communicates anything actionable in curriculum terms, because the original assessment information has been distorted into proxies that don’t carry enough meaning.

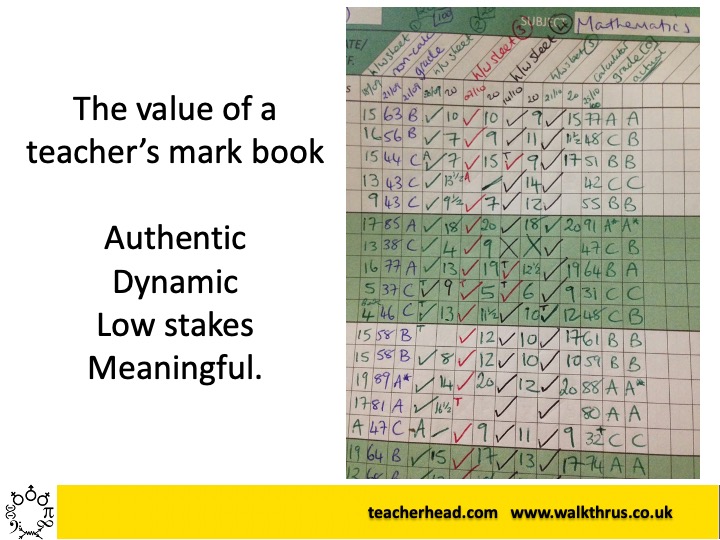

Authentic Data

This teacher markbook contains authentic assessment data: information that makes sense to the teacher in terms of specific aspects of the curriculum and student learning. There are some grades, some ‘complete/not complete’ checks and some scores on varying scales, each meaningful in terms of the specific assessment it relates to. If all this is morphed into an externally imposed standardised scale, its authenticity and meaning are massively diminished.

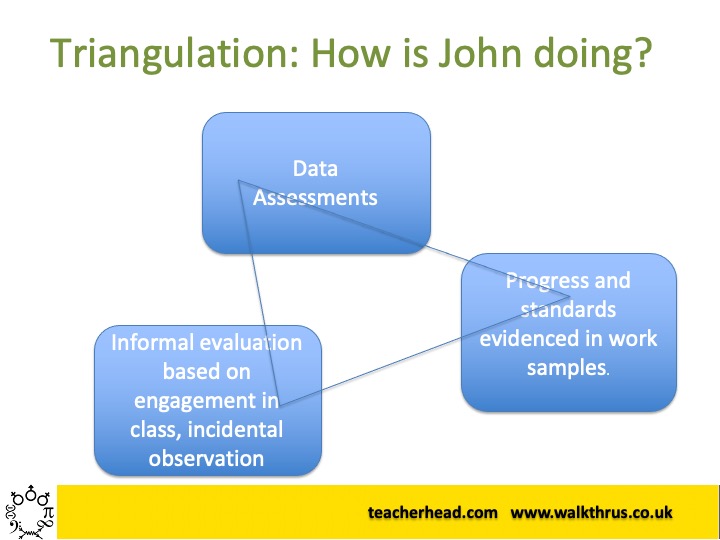

Triangulation

A more complete understanding of a student’s learning and progress is formed by triangulating between data, samples of work (the organic, qualitative, holistic sense of standards) and interactions in class dialogue. Sometimes these elements contradict each other, but together they give a truer sense of what a student knows and what they need to do to learn more.

Meaningful Measures and Goals

We must set ambitious goals for all (we’re all aiming for the top of the mountain), but to reach the top, at varying rates of progress, we need to understand the pragmatic and motivational value of aiming for short-term success in reaching achievable next steps along the path. We need assessment information that helps both teachers and students work out where they are on the path and what their next steps are, in terms of the curriculum content. This is fine-grained information about knowledge and skills.

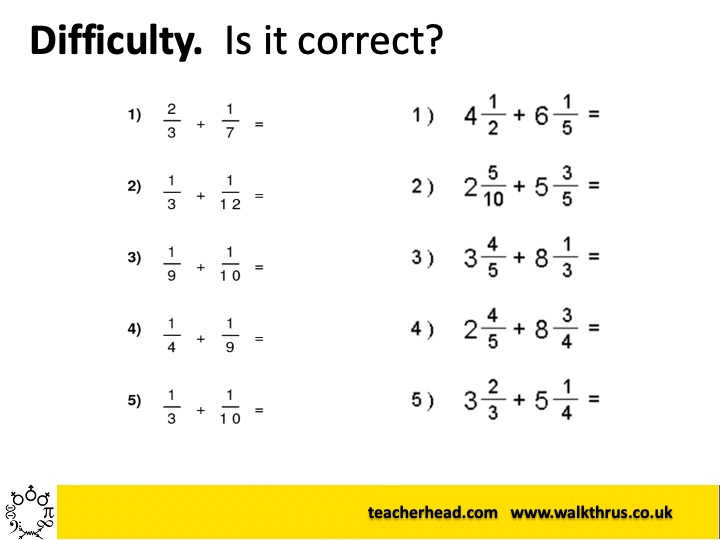

Difficulty

Our ideas about difficulty are located in the specifics of the questions students can answer and the problems they can solve. Often there’s a logical ladder of difficulty through a curriculum; sometimes the ladder is established empirically. Students’ sense of success and progress is located in getting questions right. Progress means moving on to more difficult questions further up the ladder.

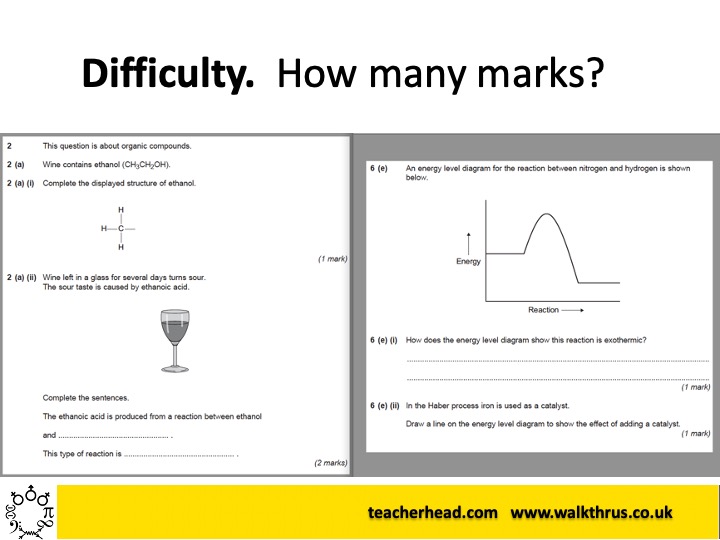

Marks and tests

Allocating marks to questions is arbitrary; a pragmatic proxy, assigning degrees of success, difficulty and relative significance in a domain of knowledge that teachers establish from experience of what students should be able to do. A test typically comprises multiple elements that aggregate numerous proxies of this kind and the language of scores is well understood – provided we know what is covered on the test. We can extrapolate a sense of ‘standards’ from test scores if lots of students take them in the same conditions. However, even a one-off test with one group has meaning on its own terms – we learn about what students know.

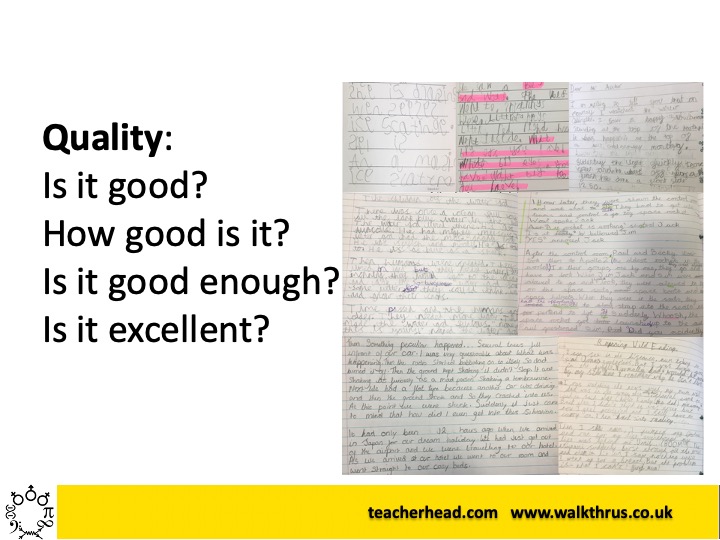

Quality

There is no correct mark for an essay or piece of writing; it’s a matter of judgement. Whilst marks and grades might give a broad sense of standards as a loose proxy, students and teachers understand the actual quality of a piece of work in the detail, by matching its features to the features of other exemplars. There’s a complex combination of overall quality with a sense of detailed criteria being met; repeated engagement in the evaluation process produces a schema for what ‘success’ looks like in the context of specific tasks.

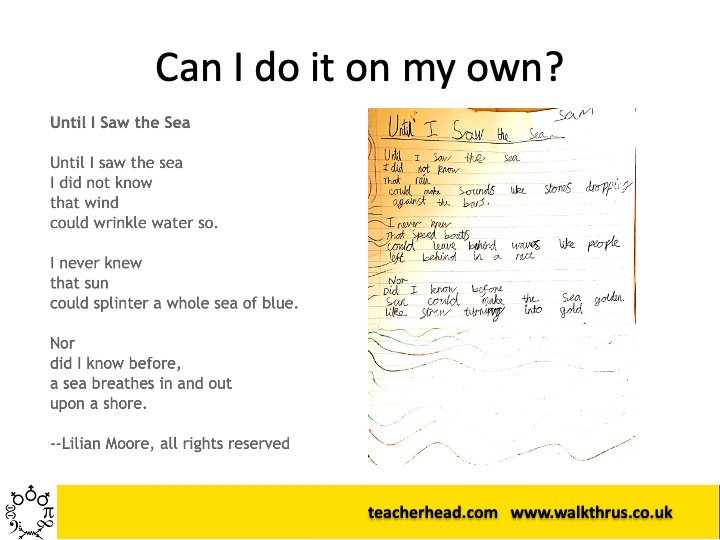

Independence

This is a poem my son wrote in Year 2. It’s brilliant! But it was heavily scaffolded. He achieved a high standard of writing with lots of support, learning a lot in the process. The next step on the journey is to apply the learning independently or with less scaffolding. Progress can be gauged by succeeding at the same task with less and less help.

Knowledge progression

Within a typical topic area, there’s a set of knowledge students typically need to gain for us and them to say ‘they know it’. However, this is not an absolute measure: there is no actual limit, and little real sense in ‘I know X’ or ‘I can do Y’ when there is nearly always more to know and ever greater depth to explore and explain. Progress manifests itself as knowing more, or explaining in greater depth or with greater fluency. Again, we need to compare examples to gain a sense of what level of depth constitutes success or excellence in a given context, in response to specific questions and challenges. However, whatever grade a student is given or score they gain on a test, there is always more they can know, and that knowledge is definable and meaningful in ways that grades and scores themselves cannot convey.

Set the standards

Establishing standards (What does excellence look like?) is an important part of a learning process. This takes a different form in difficulty-model and quality-model assessments.

Engaging students in understanding ideas about success and excellence helps them to self-check as part of their ongoing self-regulation as they undertake the learning. Ultimately, they are the people who need to know, not the teacher. Is it right? Is it good enough? Is it excellent? Have I improved?

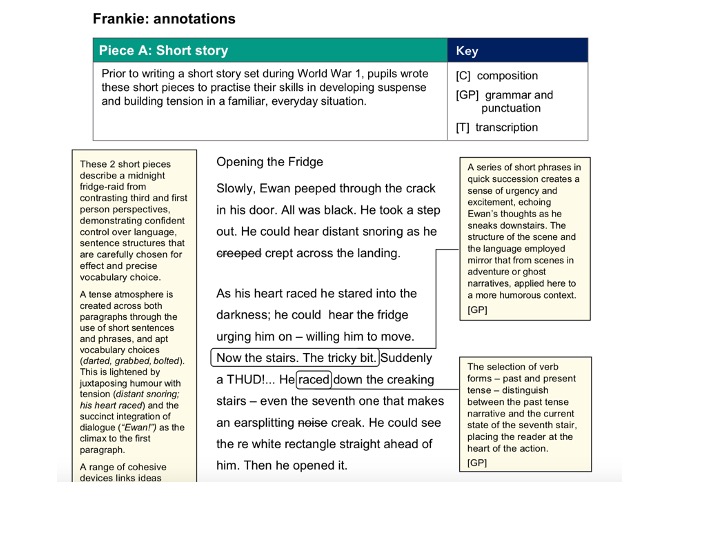

Exemplars

In multiple areas, exemplars serve as a powerful reference point for developing a shared understanding of what standards mean – what is mediocre; what is excellent. This can be hard to achieve through rubrics and success criteria or descriptors. Showing multiple contrasting examples brings the criteria off the page, allowing students to compare their work and see for themselves where they might improve.

Moderating Standards

As with the KS2 moderation materials for writing, it is essential to have a process that allows teachers to share ideas about standards, linking impressionistic, holistic ideas of quality to detailed, identifiable elements that can be taught, so students can see how to improve. In the absence of moderating exemplars and a process for comparing different samples, teachers can’t know that their sense of the standards is the same as anyone else’s, and that uncertainty then feeds into their students’ understanding.

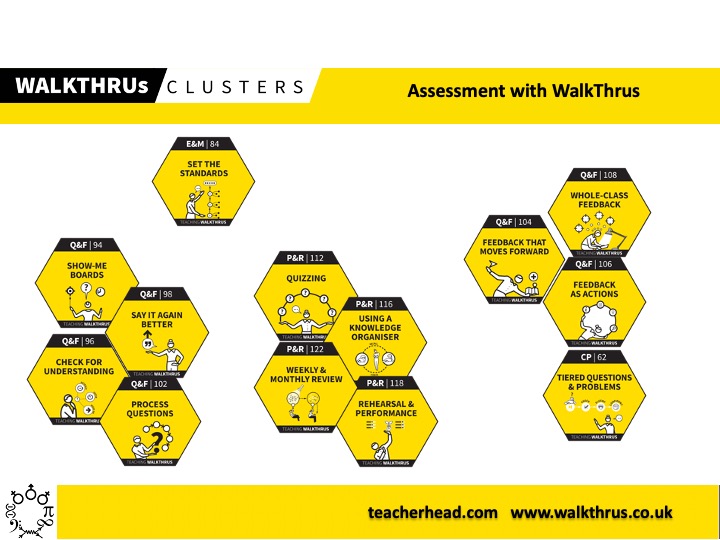

Formative Assessment Tools

Assessment obviously embraces the formative assessment that happens minute by minute during lessons through questioning, low-stakes retrieval tests and performances, and dynamic, responsive feedback. The repertoire of techniques teachers can deploy is extensive. Doing these things well, day in, day out, matters a lot more than submitting ‘accurate data’ into the central database a few times a year.

And here’s Part Two, focusing on student agency: ‘Understanding Assessment Part Two: Building student agency’.

I really like the concepts presented in your slides, how you combine different forms of evidence, and how these fit with different purposes… My one suggestion (please don’t be offended) is for your slide with working memory: I’d suggest making the circle (representing working memory) much smaller – to better represent its limited capacity. By extension, for the “network” (representing LTM) – I’d make that much bigger – even bigger than the brain – like a huge tree with branches that go way beyond the person’s head… For that would make the concrete visual more representative of that abstract text we all “know and love”: “limited capacity of working memory… and almost unlimited capacity in long term!” ps I don’t really like curriculum that much??

[…] Sherrington has shared this incredibly useful set of slides on assessment. A really useful resource for any team to use as a discussion […]

Thanks a million for sharing such useful information…

Brilliant resource to understand what assessment looks like and its intent. Thanks for sharing this unique resource for educators.

[…] will always be a distribution of performance and some students will be at the bottom end. (See Understanding Assessment: 20 CPD slides.). The question is how we deal with students at the lower end of the range. I’ve raged about […]