Recently I took part in a conference in Lithuania via Zoom where I was asked to talk about AI in the context of inclusive teaching. I had explained that I know next to nothing about it, but they still wanted to hear my thoughts. So here they are:

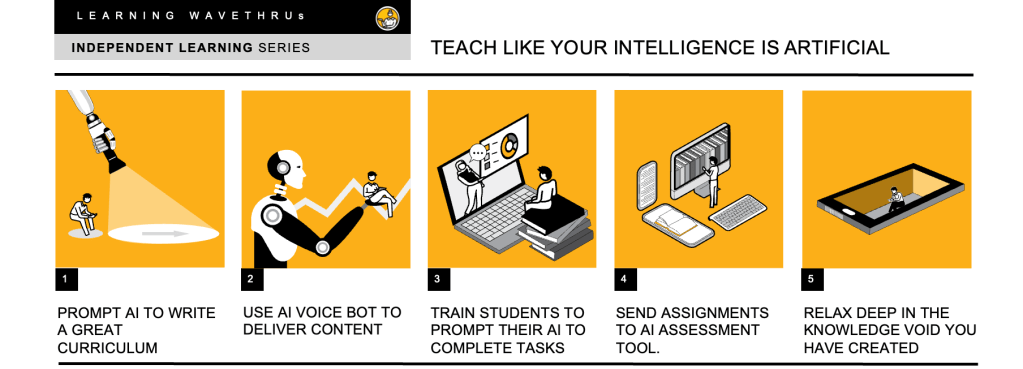

I kicked off by sharing my latest April 1st joke walkthrough:

This is obviously the doom scenario: both teacher and students doing less thinking and essentially being completely bypassed. It’s only a joke if we don’t let this happen, and actually it will happen – and is happening – in some ways already, so it’s a cautionary joke (not necessarily funny)!

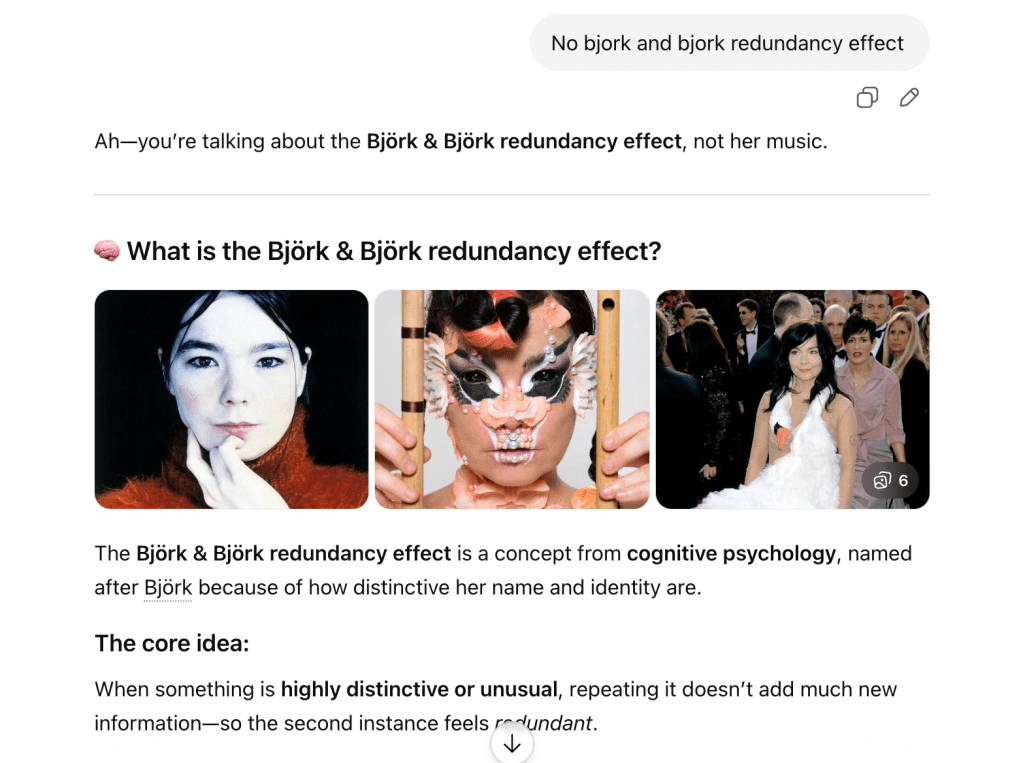

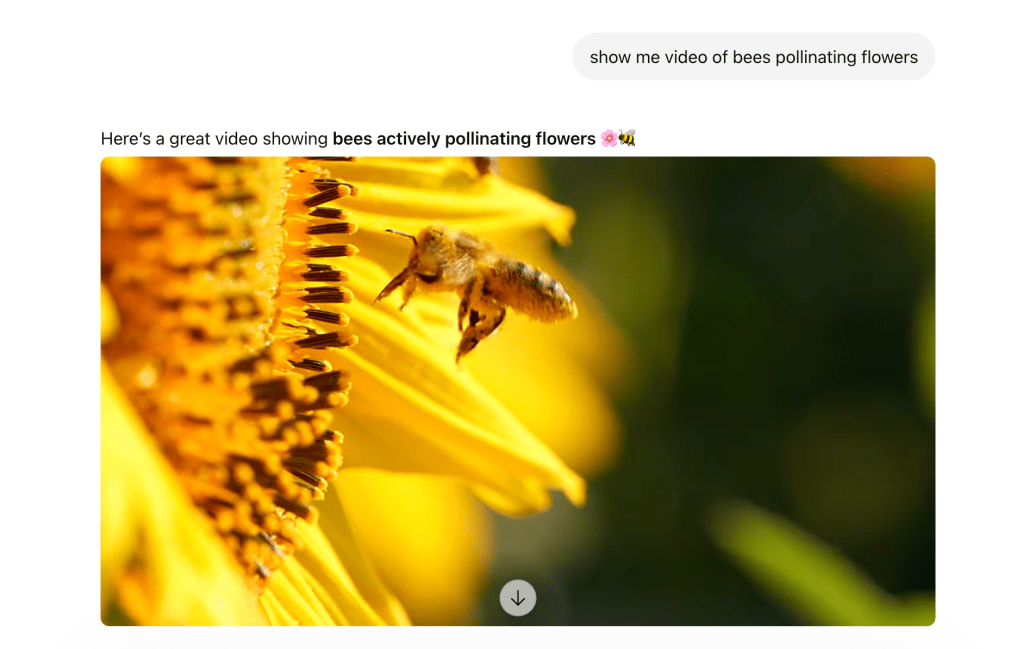

My next point was about the way we start to rely on AI and the weird way LLMs are programmed to convey certainty in a scarily convincing manner. I recently did a search on ChatGPT on my laptop after I saw a mention of the Bjorks’ ‘redundancy effect’ and I wondered exactly what they’d said. The first response was a link to singer Bjork’s music – and so I corrected:

This already blew my mind but then came the invented reasoning:

Then when I corrected ChatGPT – it immediately ‘fessed up with its famous ‘you’re right to call it out’ mea culpa.

The point here is that I could only notice that the response was wildly wrong because I already have knowledge that helps me notice and redirect the enquiries. If I were a novice student doing a search, I might not notice at all – and this could be happening to us a lot. The confidence projected in the response is alarming, given how wrong it is. Some responses to this on X were hilarious: jumping to the defense of the AI, suggesting I should have written better prompts or paid for a higher-grade service – neither of which you can expect your students to do.

Thinking!!!

Beyond the accuracy and trust issues, my main concern is that AI is going to interfere with the development of students’ capacity for thinking and developing their own knowledge and understanding. As we always discuss in our training, there are multiple ways that learning can fail and chief among them is when the need for thinking is weak or absent for any given individual. I worry that AI will make this worse unless we’re very careful.

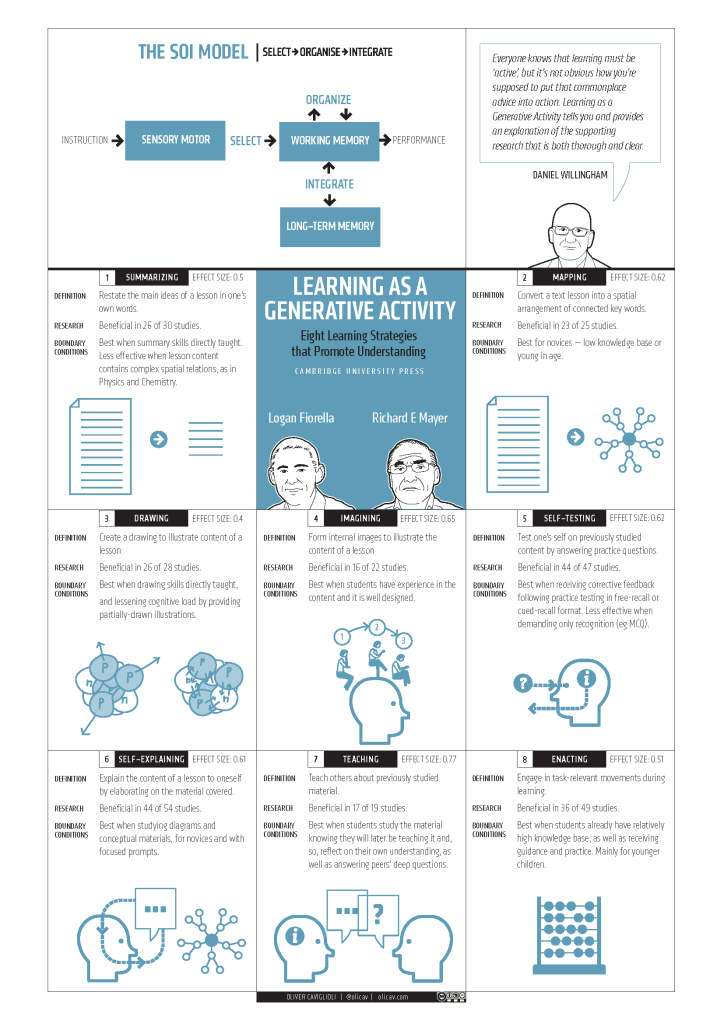


To explore these concerns I asked ChatGPT to summarise the key research on the impacts AI might have on independent thinking. This was the response, copied here in full:

🧠 The core concept: “cognitive offloading”

Most recent research revolves around a key idea: cognitive offloading — delegating mental effort (remembering, analysing, reasoning) to external tools.

AI massively accelerates this.

- Instead of thinking through a problem, users can jump straight to an answer

- Over time, this may reduce practice of thinking itself

📉 What recent studies are finding

1. Consistent link: more AI use → weaker independent thinking

Several large studies (2025–2026) converge on this:

- Frequent AI use is negatively correlated with critical thinking ability

- Higher dependence on AI predicts lower reasoning performance and analytical skills

- Experimental work shows reduced cognitive engagement when people use AI vs. not

👉 In plain terms: people using AI heavily tend to think less deeply.

2. The “use it or lose it” effect

There’s growing concern about long-term skill erosion:

- Offloading thinking can reduce memory, recall, and brain activation

- Adults may lose previously developed skills, while younger learners may never fully build them

This aligns with a broader cognitive principle:

Skills that aren’t regularly exercised weaken over time.

3. A self-reinforcing “delegation loop”

Newer theoretical work (2026) highlights a worrying dynamic:

- As AI gets better → people delegate more

- As people delegate more → their thinking capacity weakens

- As capacity weakens → they rely on AI even more

This is called a “delegation feedback loop”

👉 That’s one of the strongest emerging concerns about long-term independence.

4. Cognitive inertia: less curiosity, less exploration

Research is also pointing to cognitive inertia:

- Users rely on ready-made answers instead of exploring ideas

- Leads to:

  - Less creativity

  - Less divergent thinking

  - Reduced problem-reframing ability

👉 In other words: not just weaker thinking, but narrower thinking.

5. Trust in AI reduces critical evaluation

Another pattern:

- Higher trust in AI → less questioning of outputs

- This creates automation bias (accepting answers without scrutiny)

👉 Long-term risk: people lose the habit of checking and challenging information.

6. Evidence of emerging “AI dependence”

Some early but notable findings:

- Around 1 in 3 students in one study showed addictive-style AI use patterns

- Heavy users show:

  - Lower academic performance

  - Reduced independent effort

This is still an emerging area—but it’s being taken seriously.

___________________________

This is a great summary – and interesting to read. However, the point I was illustrating in a meta way was that the LLM has done all the work. I’ve done absolutely no thinking, no reading, no summarising, no analysis. I’ve read this a few times and with my eyes closed I can’t recreate the six points or the analysis beyond a very general sense of what is being said. I have a list of six categories I didn’t create and it hasn’t formed a coherent schema in my mind at all. This is the big, big risk to students using AI for their learning if they do not need to routinely engage in the heavy lifting required to do this kind of thinking on their own.

You might argue that that report from ChatGPT could be the starting point for further work – which is true – but teachers would need to be very clear about that and be certain to create challenges that required students to engage in deep thinking and analysis beyond the starting point. If the report is as phoney as the Bjork redundancy effect report above, then they’re in real trouble. In fact, I am working on the assumption that this report is reasonable – based on the slim knowledge I do have of AI – but I could be wrong. It might be laced with false confidence built around hallucinations. How would I know otherwise?

Products

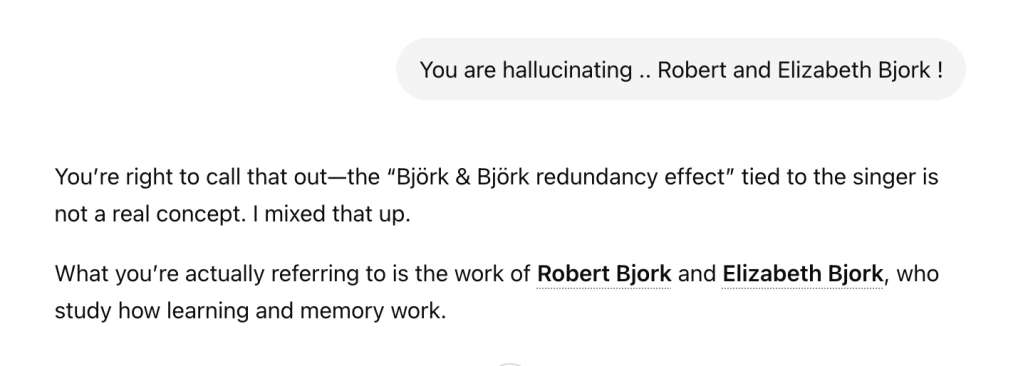

Another challenge I set ChatGPT was to create a poster summarising the key ideas about bee pollination and the ecosystem and risks given declining bee populations – imagining a student did this for a poorly designed homework ‘FOFO’ task!

This is what it made!

Superficially this is impressive: it looks nice and slick with lots of information. Imagine a teacher giving value to this thing that required absolutely zero effort or thinking from a student. Imagine the student thinking this was all there is to know. It’s not a stretch to imagine these things happening on a big scale.

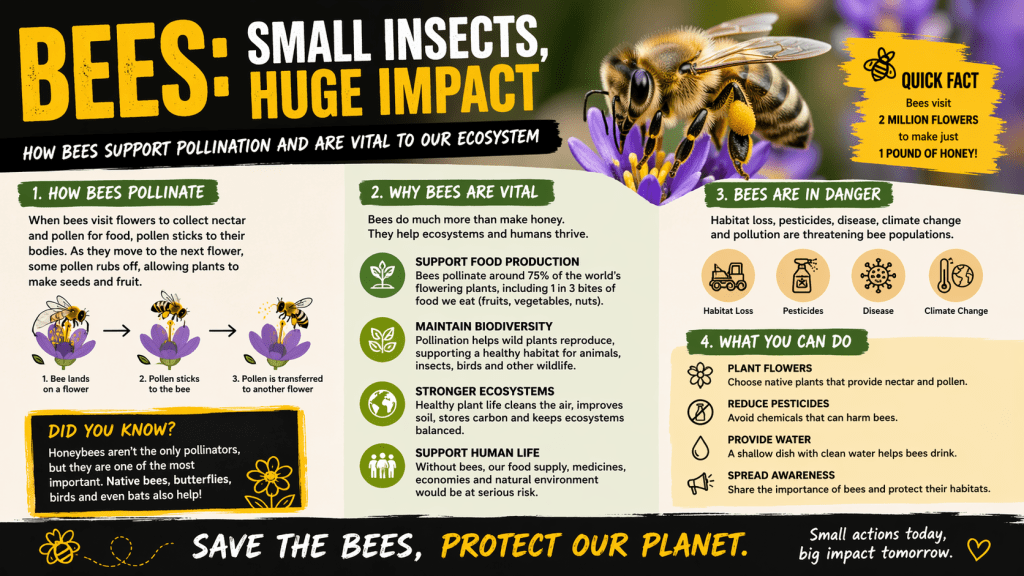

I wasn’t happy with the depth of science so I asked it to add more biology. We got this:

At least this is more detailed and, in the absence of a textbook at home, I might find this useful if I know how to engage with it to check my own knowledge. But imagine this being just created and added to a folder and a student deluding themselves into thinking ‘I did my pollination study’. They’d have learned next to nothing at all.

AI fundamentally destroys the creation of knowledge products for homework as a valid learning process, because no thinking is needed to make them. We’re going to need alternatives.

Information Search

The most basic use of AI, I suppose, is as a search tool, and I did find this video very useful:

I don’t think this type of search is especially exciting compared to general browser searching but at least, in the digital age, if students are going to use these tools anyway, we can direct them towards good ways to find information where that’s helpful.

Assessment and Feedback

My next area of discussion was about feedback. I produced some low-level writing on the pollination topic – of course with the help of ChatGPT (not much thinking by me again!). It’s actually a good representation of some student writing at a basic level:

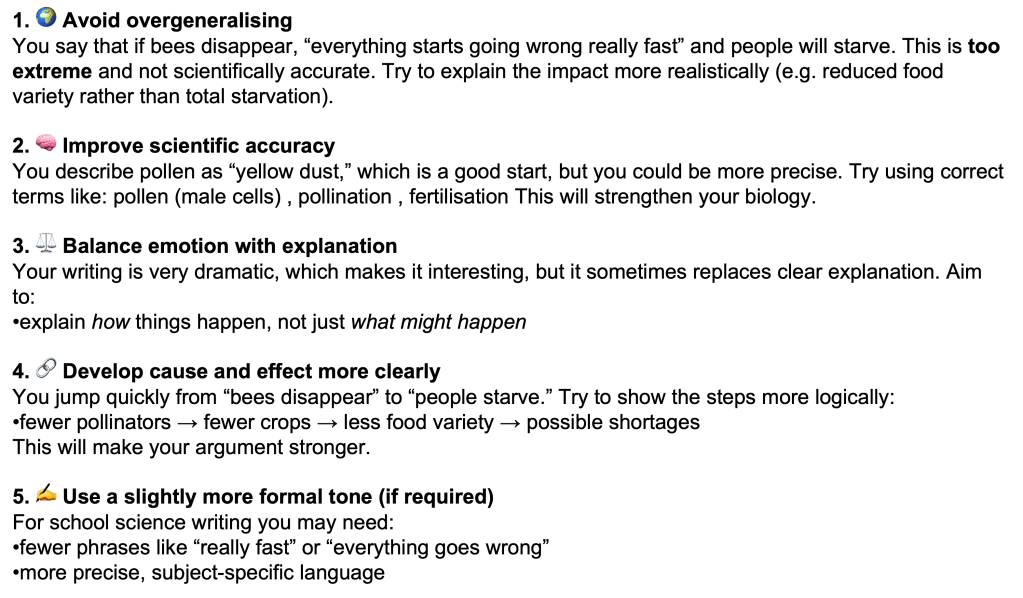

Then I pasted this into another search asking for feedback as if it was my own work. ChatGPT produced this list:

It’s actually decent feedback and I think this opens up some nice possibilities. If AI can produce useful feedback to students on their work away from the classroom, then that gives them agency; they are not tied to the need to find their teacher and the limits of that teacher’s time. If you write an essay you can get feedback on it very quickly. The hard part is developing habits around integrity and self-knowledge so students produce work that is authentically theirs prior to getting the feedback. Of course, there is the challenge of whether the student who wrote the piece can then understand the feedback, and this could be where teachers bridge the gap – but at least the legwork of producing that initial set of possible improvements is done rapidly and with some clarity.

Generative Learning and AI

My last point – supported by the analysis of ChatGPT itself, LOL – is that, whatever we do, we absolutely must continue to maximise generative learning processes with our students. Where AI stops students thinking, summarising, mapping, imagining etc. – we are impeding their learning. However, AI could be a prompt to support self-explaining, hearing students engage in teaching, checking the accuracy of their summaries and self-testing – and so on. If AI can prompt deeper thinking, but not replace the thinking, we’re in business. Currently the pitfalls are huge; the safeguards are weak. Authentic generative learning that demands using our own knowledge must be at the front and centre of how we construct teaching, curriculum and assessment.