Here’s my annual twitter joke for GCSE results day:

DfE media guidance. Response to GCSE outcomes:

- If results have gone up: This is evidence of policy raising standards.

- If results have gone down: This is evidence of policy raising standards.

It’s a clever trick – but it’s not actually wrong. A case could be made for both statements being true. We can talk about ‘raising standards’ to mean setting higher benchmarks for the standards we expect or we can talk about the standards that have been met – but they might not be the same thing at all. Nobody is better at maths simply because they sat harder exams – especially if they failed them.

I am a supporter of the exam and curriculum reforms in general but the truth is that our education system is permeated by confusion (and a fair degree of ignorance) about what standards are, how they are measured and what GCSE grades tell us.

Evidence of the toughness comes from the marks. You needed only 52% on Higher Maths to gain a Grade 7 – directly equivalent to an old A. That seems very low – meaning that the exam was hard. So, with this year’s results, we now have a very clear indication that grades do not tell us about the relative difficulty of the assessment, the standards in an absolute sense – the challenge of the material being studied. Primarily they mark positions on the bell curve. The test was harder but there are still always 50% of students in the top half! It’s not literally that simple but it’s close. The need to ensure that this year’s students were not disadvantaged by changes – the fairness principle – has driven a very strong process to keep the bell curve markers in the same place. But that makes it pretty confusing.
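To make the point concrete, here is a minimal sketch of a boundary that is pinned to a position on the bell curve rather than to a fixed mark. It is not Ofqual’s actual procedure: the cohort, the two papers, the 20% share and the function names are all invented for illustration.

```python
import random


def boundary_for_top_share(marks, top_share):
    """Return the lowest mark that still falls in the top `top_share` of the cohort."""
    ranked = sorted(marks, reverse=True)
    places = max(1, round(len(ranked) * top_share))
    return ranked[places - 1]


def share_at_or_above(marks, boundary):
    """Fraction of the cohort scoring at or above the boundary."""
    return sum(m >= boundary for m in marks) / len(marks)


rng = random.Random(2017)

# Hypothetical cohort: one underlying attainment value per student.
attainment = [rng.gauss(0, 1) for _ in range(10_000)]

# The same students sit two hypothetical papers; the harder one simply
# yields lower percentage marks for everyone (clamped to 0-100).
easier_paper = [min(100.0, max(0.0, 65 + 15 * a)) for a in attainment]
harder_paper = [min(100.0, max(0.0, 40 + 15 * a)) for a in attainment]

TOP_SHARE = 0.20  # assumed share of students awarded the grade or better

easier_boundary = boundary_for_top_share(easier_paper, TOP_SHARE)
harder_boundary = boundary_for_top_share(harder_paper, TOP_SHARE)

print(f"easier paper boundary: {easier_boundary:.1f}%")
print(f"harder paper boundary: {harder_boundary:.1f}%")
print(f"share on easier paper: {share_at_or_above(easier_paper, easier_boundary):.3f}")
print(f"share on harder paper: {share_at_or_above(harder_paper, harder_boundary):.3f}")
```

The percentage needed for the grade drops on the harder paper, but the share of students who get it stays the same. Under these assumptions the boundary mark tells you how hard the paper was, and the grade tells you where the student sat in the cohort.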

So here is the big question for me: After this year, how will we know if standards of student performance in English and Maths have gone up? It is likely that the grading machinery will keep things very stable. Should we look to see if marks on similar papers increase or will we just associate higher raw marks with the exams having been made easier? The same merry-go-round. The DfE and Ofqual will need to communicate this well. Are we going to allow some grade inflation to creep back in so that we can all believe standards of student performance are rising? Will the reference testing process be given more publicity so we all know how it feeds into the whole machine?

Competing demands on measurements

It’s an interesting challenge to understand the examination grading process; it’s an even bigger one to communicate it to students and parents. We want educational outcomes to be measurable so we know how well children have done. But we’re so deeply conflicted about whether this means recording what they know and can do – or measuring how well they did compared to everyone else.

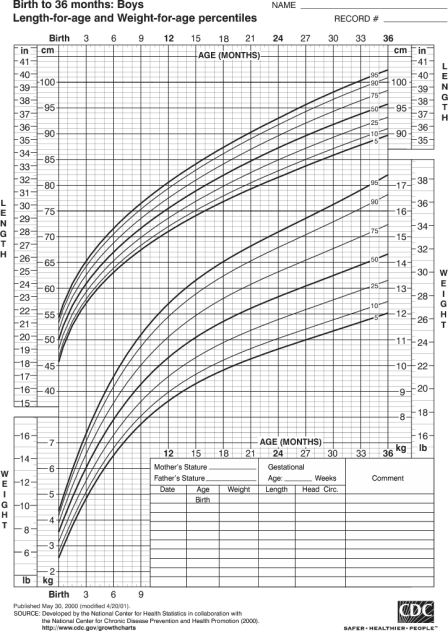

These charts are familiar to any parent. They contain both types of information: absolute and comparative. It’s more meaningful to tell a parent that their child is in the 50th percentile than it is to say, nothing to worry about Ms Smith, your child’s length is 65 centimetres. Why? Because 65 cm could be either very high or very low depending on the child’s exact age; it’s only the comparative measure that is useful. Educational measurements are very similar.

As I’ve explored in various posts – including Assessment, Standards and The Bell Curve, there are very few educational measures that are truly absolute: we almost always need to refer to the bell-curve if we want to base our ideas about standards around the notion of difficulty.

One of our problems is the widely held sense that any awards we give are cheapened if too many people get them; if they appear freely available. We want everyone to do well, but as soon as too many people start to succeed, the shout out isn’t one of celebration – it’s the hand wringing of standards being watered down. This isn’t necessarily unfounded. The extent of grade inflation in the 90s and 00s was certainly not matched by a parallel rise in the fundamental levels of educational performance of our young people. Separating real improvement from inflationary improvement is a core technical issue for examination systems everywhere. (Reference tests are part of the solution – these are happening in the background but we don’t get told much about them.)

Another problem is our strong commitment to the idea of passing or failing exams. Discourse around educational standards is littered with references to the pass/fail threshold. For decades the C grade has represented this pass and there has been a right old farce surrounding the Good Pass/Standard Pass issue in the new system. There is no intrinsic reason for any grade to be a pass, especially given that, in a norm-referenced system, not everyone can achieve a 4 or above. Nicky Morgan snatched defeat from victory (stupidity from sense) when she immediately branded 5-9 as Good passes in the new system. This was our chance to say: every grade counts; each grade represents learning to different standards that have value for what they are. It would have been perfectly possible to set Grade 5 as a benchmark level for looking at school improvement or a sensible baseline standard for moving onto A levels without needing to call it a pass (and the others a fail).

This is like making every beginner piano student take Grade 5 – so that large numbers fail – instead of setting standards at different levels that students can pass. If only our examination system was like that. Too late now… for a decade at least.

The Zero Sum Effect

Another important issue to wrestle with is one I raised in the Bell-Curve Cage post. Despite the rhetoric around the new toughness and the strongly anchored bell-curve, we are still meant to swallow the delusion that all schools are meant to show improvement. As the graph below illustrates explicitly, there are winners and losers. But still we are not allowed to talk in terms of a zero-sum. This is tinfoil-hat territory. But every school that gets a results boost can only do so at the expense of another. That’s what a stable system looks like. And yet… oh gosh. The fierce, outrageous high-stakes accountability system takes schools to pieces for a decline in standards. How loud do we shout it: WE CAN’T ALL BE ABOVE AVERAGE. WE CAN’T ALL GET BETTER GRADES.
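The zero-sum arithmetic can be sketched with a toy example. Everything here is hypothetical: three invented schools of four students each, a three-grade scale, and grades awarded purely by national rank with fixed shares (25% / 50% / 25%). Real awarding is more nuanced, but the mechanism is the same.

```python
def award_grades(scores_by_school, shares):
    """Rank all students nationally and award grades by fixed cohort shares.

    `shares` is a list of (grade, share) pairs from the top grade down.
    """
    entries = [
        (score, school)
        for school, scores in scores_by_school.items()
        for score in scores
    ]
    entries.sort(key=lambda entry: entry[0], reverse=True)

    grades = {school: [] for school in scores_by_school}
    start, cumulative = 0, 0.0
    for grade, share in shares:
        cumulative += share
        end = round(len(entries) * cumulative)
        for _, school in entries[start:end]:
            grades[school].append(grade)
        start = end
    return grades


def mean_grade_by_school(grades):
    """Average grade per school."""
    return {school: sum(g) / len(g) for school, g in grades.items()}


def national_mean(grades):
    """Average grade across every student in every school."""
    everyone = [g for school_grades in grades.values() for g in school_grades]
    return sum(everyone) / len(everyone)


SHARES = [(3, 0.25), (2, 0.50), (1, 0.25)]  # hypothetical fixed grade shares

year_1 = {"A": [40, 50, 60, 70], "B": [45, 55, 65, 75], "C": [35, 48, 58, 68]}
# In year 2 every student at school A scores 10 marks more; B and C are unchanged.
year_2 = {"A": [50, 60, 70, 80], "B": [45, 55, 65, 75], "C": [35, 48, 58, 68]}

grades_1 = award_grades(year_1, SHARES)
grades_2 = award_grades(year_2, SHARES)

print(mean_grade_by_school(grades_1), national_mean(grades_1))
print(mean_grade_by_school(grades_2), national_mean(grades_2))
```

In this toy data all three schools average 2.0 in the first year. In the second year school A rises to 2.5, school C falls to 1.5 without its students doing anything differently, and the national average is still 2.0.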

The Challenge of Tiered Exams

After some of the PiXL experiments with trial papers and boundaries, I was interested to see how this panned out in reality:

The challenge is to create assessments that tackle the spread and allow us to measure across the whole range of outcomes – but exams are not like rulers where you can simply add up the heights.

The two-tier approach is a bit like trying to streamline the ABRSM piano exams into two levels of exam – say the Grade 3 pieces and the Grade 6 exam pieces. Instead of beginners playing Grade 1 pieces and passing, they would do the Grade 3 exam, find it very difficult and get a score that is equated to a Grade 1. Similarly, the top end students don’t take Grade 8, they take Grade 6 and if they absolutely ace it, they are awarded Grade 8; if they struggle with the pieces but make at least some sense of them, they might get a Grade 4. There’s then a messy overlap: doing well on Grade 3 or badly on Grade 6.

Personally, I think it would be better if exams had Core and Extension papers – or if the Higher and Foundation marks were morphed into a single scaled score. In general, I think we should move to scaled scores of say 1-100 instead of grades. The cliff edges we live with are unacceptable really. Sadly there’s no chance of that ever happening.

The most important thing now is for there to be a moratorium on change. Arguably the process of implementing all the reforms over the last five years, whilst potentially allowing standards to rise in the future, has prevented us from actually raising standards overall so far. There is no evidence that our education system as a whole is delivering higher standards now in 2017 than we had in 2010. Part of the reason for that is that too much emphasis is placed on pieces of the system – school structures, a few celebrated new schools, a few top-end success stories, the cult of Outstanding etc. We have not been sufficiently focused on how to make students better at maths, better at English, better at science. Better in the sense that they know more; truly better in absolute terms.

This is about developing leaders, developing teachers and creating the conditions that motivate people to stay in the job long enough to make a sustained impact. I think the assessment paradigm shift I discuss here is part of it too. Let’s see if that’s the emphasis now the reform storm has nearly passed.


Grades are meant to check the level of learning against particular learning goals. Grades never say how intelligent a student is overall. E.g. a student who performed well in chemical kinetics can score less in organic chemistry for a multitude of reasons. Grade values reflect what was taught or not taught, what was learned or not learned, and what was memorized or not memorized, IN ACCORD with the type of questions on the exams. Also, the distribution of marks differs a great deal between subjects. Take the example of math and physical education. In India, most students opt for physical education over other, lower-scoring subjects, as it helps them to score more. This leads to mark inflation for these students. It would be better to have a system that TRULY says that you have got credits for reaching this learning goal.

e.g.

Your learning goal is this:

Your score for each learning goal is this.

http://technoperiod.blogspot.in/2017/02/personalized-learning-goals-new.html


Hi Tom

Why do we enter students who have two years of compulsory education left into national high stakes competitive and comparative testing at all? We are the only education system in the developed world to do this. Is it to ration access to Level 3 courses? Is it to provide a comparative accountability measure for all schools including those who do not have post 16 provision?

It does not in my view allow us to determine the ‘brightest students’. They are more effectively defined by the numbers who get first class degrees. Where is the research that correlates first class degrees with GCSE grades?

This annual smoke and mirrors show has become more detached from the reality of what 16 year olds know, understand and can do by the reintroduction of norm referencing by means of comparable outcomes, the notion that the reformed GCSEs will be ‘more challenging’ and the farce of a ‘strong’ and ‘standard’ pass.

