My recent keynote for ResearchEd in Leicester examined the ideas around being evidence-informed. This pulled together ideas I’ve explored in a number of previous posts. Here’s an attempt to convey the gist:

Learning happens in numerous contexts. Classrooms are complex places with multiple factors that contribute to learning. It’s natural and important that we should seek to understand how that all works – including what doesn’t work.

Does it matter if teachers have a secure model for a learning process? Yes, it does. Otherwise they end up doing things that are ineffective – I have seen many examples of poor lesson activities that stemmed from the teacher’s dodgy model for learning. This includes retrieval routines that somehow replaced the actual teaching bit.

Being evidence-informed is important – but there are two ways of thinking of this. The first is ‘Trials and Studies’. This approach is taken by the EEF and other bodies; studies into specific areas of activity are undertaken and measures of impact are made – alongside costs and other factors.

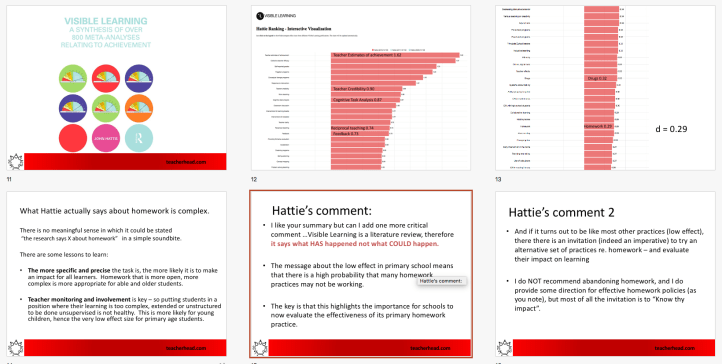

This approach informed Hattie’s famous Visible Learning work, which dominated discourse in the early 2010s until people challenged the whole notion of ‘effect size’. If you can put ‘Drugs’ on a list of inputs, we immediately need to know which drugs, in which doses, for what purpose, with which students and so on. (It’s about ADHD.) But the same must also apply to ‘Homework’ – all the variables need exploration. I explored this in detail in a 2012 blog which Hattie commented on. He’s clear that his research only shows what has happened, not what could happen: such an important distinction. There is no absolute truth about homework’s effectiveness without detailed context.

Homework: What does the Hattie research actually say?


Another example is the EEF trial into growth mindset interventions in 2019, which ‘did not find any evidence of impact’. This could be because the concept is flawed and ‘does not work’, but if you look at the methodology in the trial, the level of input is so shallow that it would actually be remarkable if any intervention showed an impact of any value in such conditions. So, this raises questions about the validity of doing even large-scale trials which are so removed from sustained teacher practices.

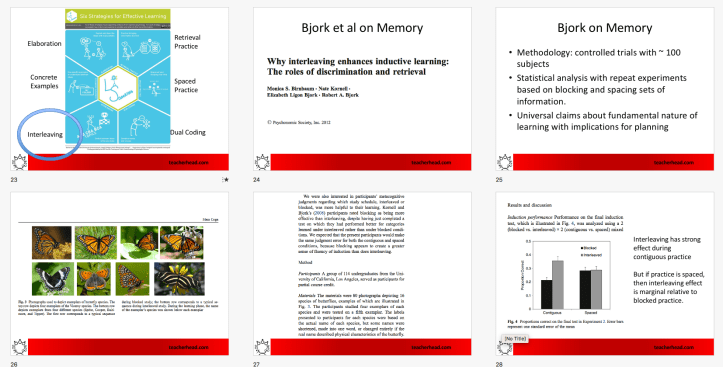

A different type of study is the kind undertaken by researchers such as the Bjorks into concepts such as interleaving. Here, a trial involving 114 undergraduates learning the categories of butterflies is the basis for some insights into interleaved and spaced practice versus blocked and continuous practice. It is tightly controlled in terms of variables and yields some insights, but clearly there are limits to how far you could extend the conclusions beyond the conditions of the trial.

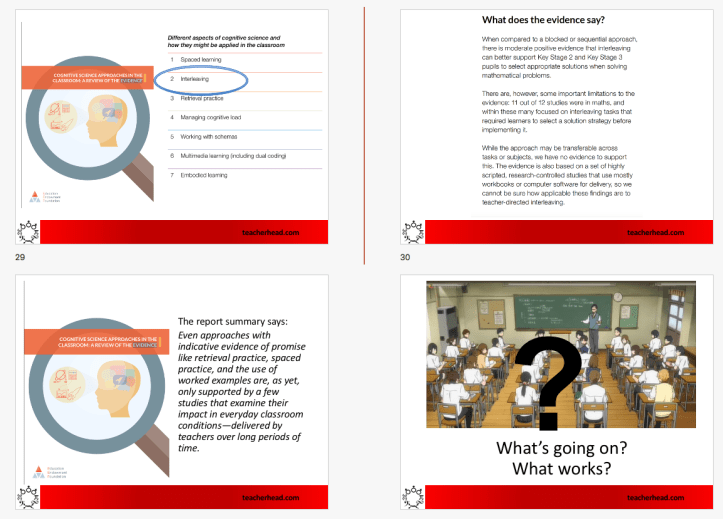

Interleaving is one of the approaches covered in the EEF review of practices based on applying cognitive science. The report suggests that, to date, there are just not enough trials of this kind, where, for example, interleaving, spaced practice or retrieval practice is tested in ‘everyday classroom conditions – delivered by teachers over long periods of time’. So, for some, an ‘insufficient evidence’ cloud hangs over these ideas.

But is that the right way to think of it?

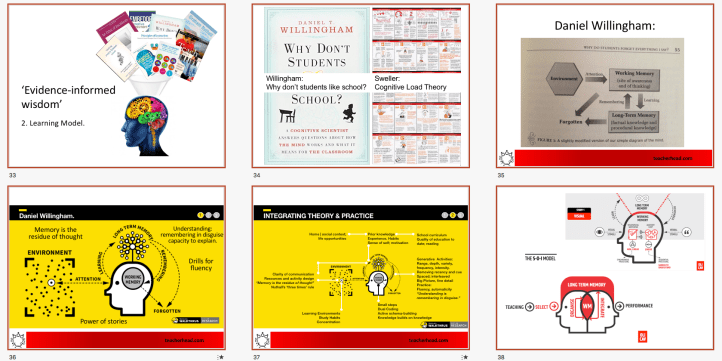

This leads into the second way of thinking about being ‘evidence informed’: The Learning Model. For me, the most useful way to consider evidence about learning is in terms of forming a coherent model for how learning happens in general terms, that can then be applied to numerous situations. Dan Willingham, John Sweller and others have provided us with a simple (not simplistic) schematic model for learning that helps explain how students learn and don’t learn. It’s reinforced by ideas about dual coding and Mayer’s Select-Organise-Integrate (SOI) model.

A model for the learning process. And why it helps to have one.


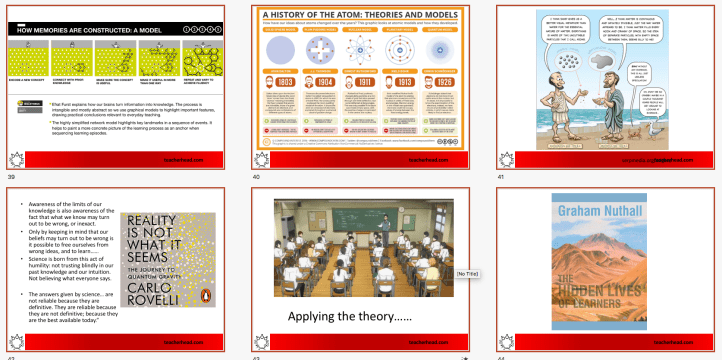

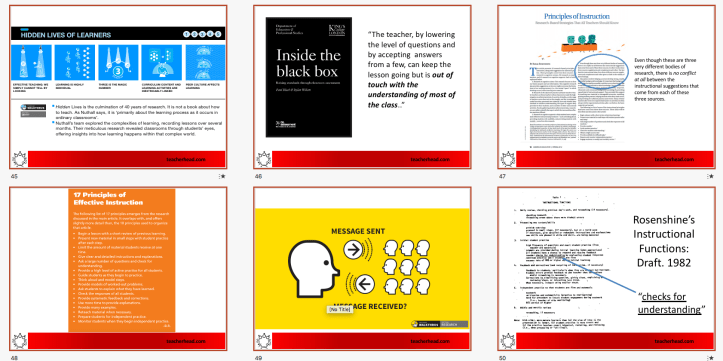

Efrat Furst’s explanation of the memory forming process is brilliant, bringing additional clarity as she distinguishes early rehearsal from subsequent recall. As with all good scientific models, our understanding of learning deepens over time. From Democritus onwards, we’ve refined our ideas about atoms. Rovelli has important messages about the nature of scientific understanding and the nature of uncertainty; the need for humility in questioning our understanding at any given point.

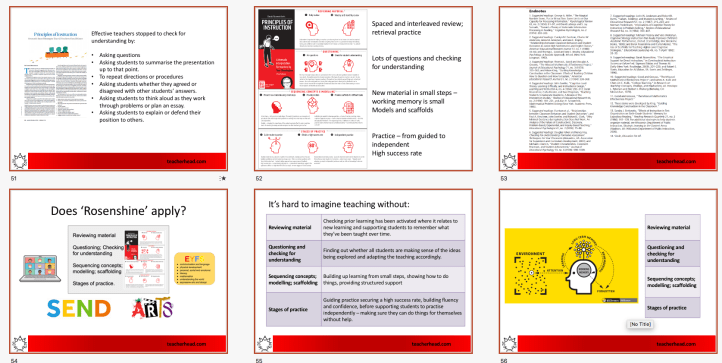

Theory then needs to be applied, and this in-situ exploration is what Nuthall does so successfully in Hidden Lives. He provides insights, not measures.

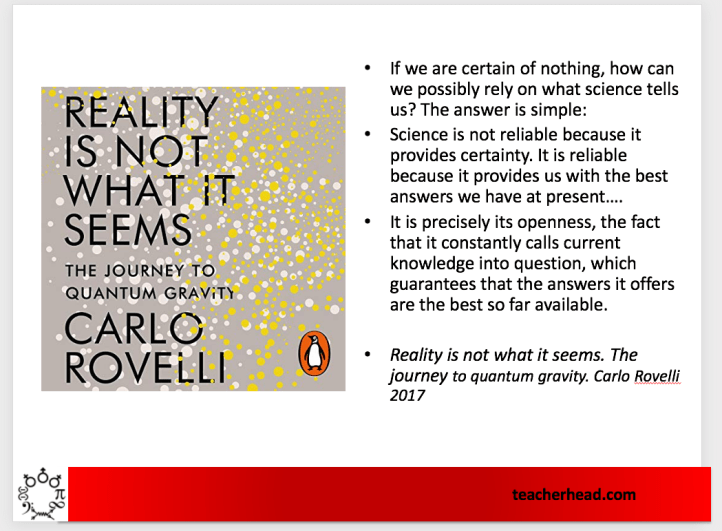

Wiliam and Black’s work in Inside the Black Box and Rosenshine’s Principles of Instruction show how general ideas about learning manifest themselves in classrooms. They explore what teachers actually do when they seem to be successful and explain this in terms of the underpinning learning process. Their insights gain acceptance because they chime with teachers’ reality and because they can be explained.

For me, the ‘evidence informed’ label applies to Rosenshine, but not because he proved that his ideas are backed up by any particular studies. I for one have never gone through each of his citations to see his references and suggested readings. I don’t feel I need to. I don’t expect anyone to have tested ‘daily review’ or ‘modelling and scaffolding’ in the specific contexts I inhabit. I have confidence in the ideas because they are rooted in a model of learning that seems secure and can be applied in multiple contexts. We can explain each of the 10 principles (or 17 in their original form) in terms of the model of learning that has been reinforced by numerous cognitive scientists. It makes total sense to me. It’s the whole field of study that provides the evidence; this is what gives us confidence – not some measured effect sizes in a few context-specific trials.

So, that’s my pitch. If we have a general model for learning that we can test out in our own contexts and it seems to have strong explanatory and predictive power, then we trust it. This is far more useful to us than looking at a set of macro trials of specific sets of activities in someone else’s context with measured outcomes that average out the detail and the complexity.

The important thing then is to refine the model continually. That’s how we remain evidence-informed. Once again, Rovelli is worth quoting…

I most wholeheartedly agree with you. The model for learning becomes particularly pertinent for the priorities in English, which Ofsted have emphasised.
