When results from public exams and assessments are published, the first response should, of course, be to congratulate students on their achievements. Every student deserves to enjoy their success; they worked hard; their teachers worked hard. Celebrations are due! Even though the circumstances of the last two years have been radically different from anything before, a student who has done all that's asked of them has a right to feel that their grades have as much value as anyone else's. Every student is entitled to believe their grades are legitimate, arrived at through a fair process. I hope most students feel that way and have negotiated the progression paths they've earned access to.

However, after that pause for celebration, and acknowledging the turbulence that students have endured, it should be perfectly OK to discuss the issues surrounding the nature of the assessments we use to award qualifications and to consider the issue of grade inflation. It's not some kind of mean-spirited denigration of people's achievements – it's a technical discussion about the way we determine standards and the value we give to outcomes.

I also think it's important not to make it a party political issue or to treat it as unique to our country. Grade inflation is discussed in Ireland, Sweden, the US and Canada; it's discussed in relation to Higher Education as well as GCSEs and A levels. Why? Because it's an inherent issue in any large-scale assessment system: the mechanisms we use to determine the standards students have reached, and to maintain those standards over time, are complex. Our attitudes and beliefs around standards and access to qualifications are complex too.

It's not a question of trust in teachers' professionalism or knowledge – I see it as a major error when people react against concerns about grade inflation by seeking to defend teachers as if their integrity is under attack. This is so common – and it prevents us from having the technical discussions we need. It's not about cheating. It's not 'nonsense' – as if we can just sweep it away. As I explore in this post, the trust we need is in the systems teachers operate in, not in the teachers themselves.

This year, the outcomes have been determined in a way that locks in 'benefit of the doubt'; the 'best of three' effect has fed into teachers' judgements, so a significant increase in top grades was inevitable, especially when any cohort-wide counterbalancing moderation seems to have been negligible. To some, this is a fairer reflection of the standards students have reached than the exam-dominant system of 2019 and before. That's hugely debatable in itself but, in any case, it's important not to confuse this year's rise with the steady creep of inflation that can happen even when the assessment format is identical year to year.

Why is grade inflation a problem? It matters if we want the grades students receive to have value and credibility – all the grades, not just the ones at the top. Even people who want to dismiss this issue would have a limit. Let's say the normal pattern is that 25% of grades are A/A* or 7–9, but then it shifts to 30%. Some people will say the usual 'testament to everyone's hard work, trust teachers' stuff and not want to acknowledge the inflation. They will see it as more students having reached the standard, rather than the standard dropping. But what if it then goes to 35%, then 40, 45, 50? At some point everyone commenting on this would think: hang on, that's rather too many people getting the top grades.
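To make the 'at some point' intuition concrete, here is a minimal Python sketch. It assumes, purely for illustration, that a cohort's performance is normally distributed and does not change from year to year, and asks where a single top-grade boundary would have to sit (in standard deviations from the mean) for a given share of students to clear it. The function name `boundary_z` is hypothetical.

```python
from statistics import NormalDist


def boundary_z(top_share: float) -> float:
    """Position of the top-grade boundary, in standard deviations from the
    mean, such that a fraction `top_share` of the cohort scores above it.
    Illustrative assumption: scores follow a standard normal distribution."""
    if not 0 < top_share < 1:
        raise ValueError("top_share must be strictly between 0 and 1")
    # The boundary is the (1 - top_share) quantile of the distribution.
    return NormalDist().inv_cdf(1 - top_share)


if __name__ == "__main__":
    for share in (0.25, 0.30, 0.35, 0.40, 0.45, 0.50):
        print(f"{share:.0%} top grades -> boundary at {boundary_z(share):+.2f} SD")
```

Under those assumptions, moving from 25% to 50% top grades means lowering the boundary from roughly 0.67 SD above the mean to the mean itself, with no change at all in what the cohort can actually do.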

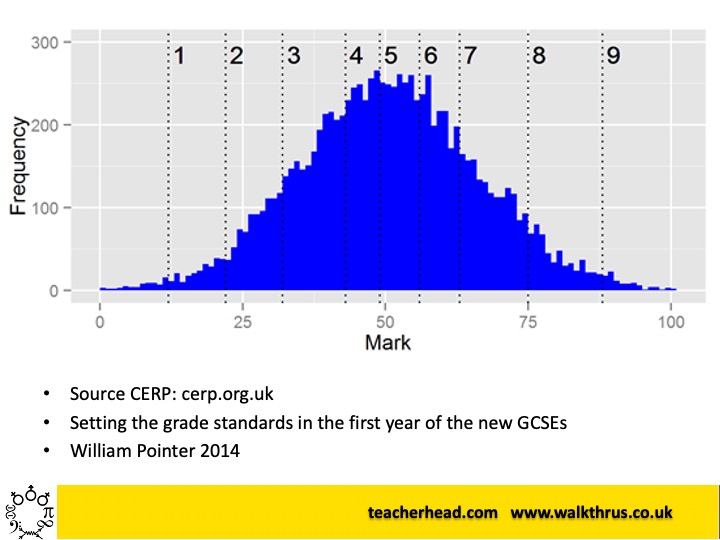

Once people doubt the value of grades, everyone loses. In our system, if we are to have a set of graded outcomes, we need some confidence that a grade means a standard – and like it or not, a bell-curve reference is inherently part of that. In my assessment training I often refer to this curve:

It comes from a trial Maths exam taken before the new GCSE was rolled out. The point is that the shape of the distribution is a property of the performance of the cohort – some 5,000 students who took this test. The 0–100 range is scaled from the original lowest to highest raw scores. This is no conspiracy. The question then is how we use this distribution to determine a set of standards and then award credit for reaching them. The grade boundaries are entirely arbitrary. If we run two sets of assessments with similar large cohorts and want to align their standards, the most secure assumption we have is that the distributions of performance will be similar – so we shift the boundaries to make that happen. We invest in extensive moderation. If we let the number of people achieving the higher grades increase year on year, we can be deluded into thinking standards are rising, i.e. that more students are learning more. In fact, it's more likely that we are letting standards slip because we're making the higher grades easier to get. This is such an important distinction. If we conflate the two, ignore the difference or dismiss it, we end up with a system that people lose faith in – so they start setting their own extra tests, and nobody benefits.
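The boundary-shifting logic described above can be sketched in Python as a simple percentile match: work out what share of a reference cohort reached each boundary, then set the new assessment's boundary so the same share of the new cohort reaches it. This is a deliberately simplified illustration of the post's assumption that similar large cohorts perform similarly; it is not the procedure exam boards actually use, and all function names are hypothetical. The min-to-max rescaling mirrors how the 0–100 range on the curve was produced.

```python
def scale_0_100(raw_scores):
    """Rescale raw marks so the lowest becomes 0 and the highest 100."""
    lo, hi = min(raw_scores), max(raw_scores)
    if hi == lo:
        raise ValueError("need at least two distinct scores to rescale")
    return [100 * (x - lo) / (hi - lo) for x in raw_scores]


def share_at_or_above(scores, boundary):
    """Fraction of the cohort scoring at or above a boundary."""
    return sum(s >= boundary for s in scores) / len(scores)


def matched_boundaries(reference_scores, reference_boundaries, new_scores):
    """For each reference boundary, pick a boundary on the new assessment that
    gives (as nearly as ties allow) the same share of candidates at or above it.
    Assumes both cohorts are large and of similar underlying ability."""
    ranked = sorted(new_scores, reverse=True)  # best score first
    n = len(ranked)
    boundaries = []
    for b in reference_boundaries:
        target = share_at_or_above(reference_scores, b)
        k = round(target * n)  # how many new candidates should reach this grade
        if k == 0:
            boundaries.append(ranked[0] + 1)  # set above the top score: nobody reaches it
        else:
            boundaries.append(ranked[k - 1])  # score of the k-th best candidate
    return boundaries


if __name__ == "__main__":
    reference = list(range(100))          # synthetic marks 0..99
    easier = [x + 10 for x in reference]  # same cohort, paper 10 marks easier
    print(matched_boundaries(reference, [70], easier))
```

In the synthetic example, every student scores 10 marks higher on the easier paper, so the matched boundary moves from 70 to 80 and the share achieving the grade stays at 30%. Leaving the boundary at 70 would instead hand the grade to 40% of the cohort with no change in what they know.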

Of course, we need to allow for underlying standards to rise – so it's not rigid. However, the pace of that is likely to be gradual. I remember being told by an Ofqual bigwig that a rise of 0.7% in one year in the proportion of the whole Maths GCSE cohort passing at grade 4+ was 'pretty generous'; this was in line with evidence from the national reference tests, which deal with exactly this type of problem. That means that rises of 5% or more do not represent standards rising – they mean the test was easier or the grading more generous (or lenient, depending on your perspective). This isn't, then, about students working hard and getting their rewards – students work hard every year!
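A back-of-the-envelope Python sketch of the arithmetic in the paragraph above. It treats the quoted 0.7% as 0.7 percentage points of the cohort per year (an assumption: the figure could also be read as a relative change) and asks how many years of 'pretty generous' genuine improvement a given one-year rise would correspond to. The constant and function names are hypothetical.

```python
# Assumption: the 'pretty generous' one-year rise quoted above, read as
# 0.7 percentage points of the whole cohort.
PLAUSIBLE_ANNUAL_RISE = 0.7


def years_of_improvement(observed_rise, plausible_rise=PLAUSIBLE_ANNUAL_RISE):
    """How many years of plausible genuine improvement a one-year rise
    (in percentage points) is equivalent to."""
    if plausible_rise <= 0:
        raise ValueError("plausible_rise must be positive")
    return observed_rise / plausible_rise


def unexplained_rise(observed_rise, plausible_rise=PLAUSIBLE_ANNUAL_RISE):
    """Portion of a one-year rise not accounted for by a plausible genuine
    rise, under this deliberately simple additive model."""
    return max(0.0, observed_rise - plausible_rise)


if __name__ == "__main__":
    for rise in (0.7, 2.0, 5.0):
        print(f"{rise:.1f} points: ~{years_of_improvement(rise):.1f} years' worth, "
              f"{unexplained_rise(rise):.1f} points unexplained")
```

On that reading, a 5-point rise in a single year equals about seven years' worth of plausible improvement, which is why such a jump is better explained by an easier test or more lenient grading.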

I don't think this should be controversial. What frustrates me is how many people involved in education don't even seem to understand how the system works in normal times, never mind when we've had a two-year aberration where we're not comparing like with like. I don't even think 'inflation' is the right word for what happened this year – it's a step-change resulting from using an entirely different system, and it means there's a risk we've partially lost track of the underlying standards the grades were originally pegged to. If we kept the teacher assessment system, it would require total recalibration. If we're going to return to exams next year, we can treat 2020 and 2021 as anomalous years in the trend. However, at some point we'll need to re-establish the baseline, where people know and understand what constitutes the standard for each grade, and for that we need consistency and minimal change.

Of course, we're still left with the issue of the 'forgotten' third. That's a big problem. Lower grades have been utterly devalued, even though, in all likelihood, it's statistically impossible for everyone to 'pass' with grade 4 or above. We have the bell-curve cage problem. The answer to that lies in finding different ways of measuring success (yes, I mean the National Bacc, for example) and investing in the long-run struggle of teaching students more effectively from day one – but it does not lie in trying to force more students higher up the bell curve by gerrymandering the outcomes. That's a lose-lose situation.

I do agree with what you have said, and I would love to think that a sense of normality will return. However, with education changes being set by people who do not necessarily understand the reasoning behind the bell curve, and a general public to whom the setting of grade boundaries is inexplicable, I do wonder what will actually happen in the next few years!

I think the key issue that isn't discussed enough is grade descriptors versus grading on a curve. What was removed this year was national standardisation, replaced instead with a system that more explicitly stated 'if a student matches this descriptor, they will achieve this grade'. This exists in normal years but is more of a charade, with grades actually being too focused on comparisons between students. What the last two years have revealed is that our public examinations are effectively a national ranking exercise.

Now, I'm not therefore advocating throwing everything out or relying solely on teacher-assessed grades as we did this year. However, if we go back to traditional approaches, we should be much more transparent about the comparative element, both in how students' work is marked and in how results are subsequently reported.

In truth exams have always been about comparison – all assessment is. This isn’t a revelation really. Exam boards do use exemplars to check standards year to year around grade boundaries. This year the grade descriptors were issued but actually only really made sense once you got examples together to compare them. You can’t ever make sense of standards without comparison – that’s just how it works.

But why do you think there is such a big shock this year when you take the comparative element away? Would we not prefer a system that genuinely says 'if you can do this, it deserves an A'? At the moment that's a pretence we all maintain, when in reality the system says 'if you're better than x many people at understanding a concept, you'll get an A'. It's this latter approach that gives private schools the leg up they have, in my opinion.

There is no way to define standards in the way you suggest – ie with ‘can do this’. There are always degrees and comparison is how standards of difficulty or quality are determined. Private schools had a big leg up this year for sure – that’s the truth of it. Exams that everyone can take are more neutral – that’s what the evidence tells us. Some people don’t like that reality.