It’s continually frustrating to me that so much of the discourse in our debates and in the reading of education research reduces teaching and learning effects to crude averages and sweeping assumptions, ignoring complexity and context. At the same time, it’s equally frustrating that some people’s response to this complexity is to reinforce a view that ‘anything goes’. Surely a more sensible position would allow us to embrace research evidence about effective teaching whilst also recognising its limits and being extremely cautious about definitions and contexts.

For example, at a recent ResearchEd event, I used the slide above in a talk about Hattie’s meta-analysis, using homework as an example of the pitfalls of interpreting the results. I’ve written about this in full in this post. It was interesting to see, in a ranking of the Visible Learning effect sizes, that Drugs appears – with an effect size of 0.32. Drugs?? 0.32?? Immediately, this leads to a whole host of questions: Which drugs? For which students? In what doses? Over how long? Measured in relation to which kind of learning tasks? … The list of questions is endless. Because we all know that ‘Drugs’ can’t mean one thing, we’re not likely to take that ranking at face value. It turns out, if you read Visible Learning, that this relates to interventions for ADHD. Even with that insight, the same questions apply in querying whether a single effect size would be meaningful.

In that context, Homework, similarly, is not a ‘thing’. As I explore in my Hattie post – as he does in his book – it’s a lot more complicated. Effects for primary and secondary are very different on average and, crucially, bigger effects are seen for the more measurable tasks – which doesn’t necessarily mean they are better. Actually, there is a lot of learning from the study – but the numbers don’t really tell the story at all. It’s all in the detail – to the point where the concept of ‘homework’ pretty much falls apart as a coherent definition. It depends on too many variables.

Look at other things in this list. Creativity? Effect size 0.35. How could that be a single entity? Feedback? Effect size 0.73. Straight away, a thousand questions and clarifications are needed before we could discuss these findings. We would need to read the studies in detail (Who? What? How?) before we embraced the learning from them. As Dylan Wiliam regularly points out, feedback can have negative effects as well as positive; it all depends on what exactly you’re doing and, crucially, it depends on student-teacher relationships.

I would say the same level of caution needs to apply to discussions about things like group work. For sure, some forms of group work are lame and ineffective. I once watched a pitiful ‘Envoys and Experts’ lesson about Henry VIII in which less information was disseminated between students (never mind learned) in an hour than would be possible in five minutes of reading or teacher exposition. ‘Group work’ is not an inherently good thing in itself; nor is it a bad thing. As I explain in detail in this post, it can be necessary and very effective – even if very often it is not. But that doesn’t mean ‘each to their own’ or ‘anything goes’. It means teachers should evaluate whether a particular group-based activity is going to yield good learning outcomes for a particular set of students compared to some other strategy for a particular set of concepts and skills. As I discussed in this recent Context is King post, where you have a class full of sophisticated learners with lots of prior knowledge, you can do things a bit differently to where the opposite characteristics dominate.

Graham Nuthall explores this issue at length in his brilliant book The Hidden Lives of Learners:

“..teaching is about sensitivity and adaptation. It is about adjusting to the here-and-now circumstances of particular students. ….Things that work one day may not work the next day. What can be done quickly with one group has to be taken very slowly with another group. What one student finds easy to understand may confuse another student. ….As a teacher you make adaptations. You must. The important question is: what adaptations to make? You can do it by a kind of blind trial and error, but it would be much better if you knew what kinds of adaptations are needed, and why.”

Another issue that Nuthall reports – as have Wiliam and others – is that teachers are not good at describing what they do. These two effects occur in studies: Different teachers doing the same things in their lessons will describe them as being very different. Also, different teachers doing very different things in their lessons can describe them as being the same. So, to use a recent example, you might be picking up on a wave of interest about explicit knowledge and providing your students with knowledge organisers. You might self-report that ‘yes, I’m using knowledge organisers’. However, in the school or even classroom next door, you might see that it is the routine of daily review and self-quizzing that is yielding returns based on their knowledge organisers – something you are not doing. You might think you are ‘doing knowledge organisers’ but your version is massively ineffective compared to the more intense approach next door.

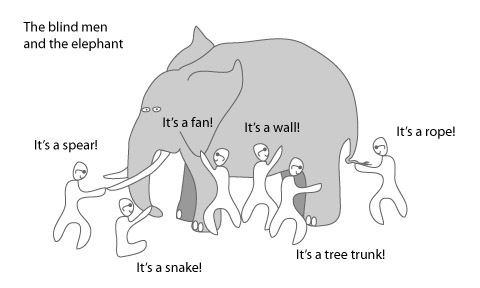

Then there is the ‘describing the elephant’ syndrome:

Self explanatory? Here’s the same idea in a different format:

I can’t help thinking that, in the important and helpful debate about progressive and traditional approaches to education, whilst we might have different orientations, a high proportion of what actually happens is the same in practice. If you walked at random into lessons across the land, I would guess that 90% of the time you’re going to find a teacher at the front explaining things to students or students sitting quietly, heads down doing some work. You might want to pull those yellow and red Venn shapes further apart – but the overlap is huge. This is why some people resist or reject the debate altogether.

I’ve found my own way to rationalise it by thinking about sequence and context – in this post – and diagram. The central role of explicit knowledge instruction is clear and I would say that, generally, this needs to be strengthened in our thinking about teaching and learning and assessment. However, it needs to be put in a wider context.

I find the Mode A-Mode B concept is helpful in that regard – with proportions that are contested by everyone I ever discuss this with!

Again – it’s about balance; a breadth of learning experiences and doing things in the right proportions in the moment and over time.

Of course, we have to be cautious about ‘lucky pants’ syndrome – which I borrowed from a comment by Greg Ashman in relation to my co-construction experiments at KEGS. My students did well, but he suggested that you might wear your lucky pants during your exams without this accounting for any successes you might have. In other words, spurious causal attribution is a danger we face as we’re heavily influenced by our biases. It is hard to accept that a strategy you have invested time in might not have worked or might not be the reason for success.

Possibly above all, beyond what you do – it is how well you do it. That might go without saying but I’d suggest that, where teaching is ineffective, it is less about the selection of strategies and more about problems with confidence, subject knowledge, the pitch of the content and expectations of students. Very occasionally, a really great teacher picks a duff strategy – but that’s not going to kill anyone.

Great post!

I too take issue with the simplicity of ed research. It often misses vital questions: what is the outcome we’re measuring? Is that a worthy outcome? What are the costs of this outcome? Is it morally or ethically appropriate?

As someone who was on ADHD drugs for my teenage years and early adulthood, I’ve experienced first hand the costs of being required to take medication for the benefit of my school and parents. So, saying drugs has a positive effect misses out on a number of ethical questions. It probably helped the class, but severely stunted my emotional development and conscious experience of life.

If we really wanted to increase the effectiveness of our teaching, why not ship out all pupils with SEND or behavioural issues? What about teaching every child 1:1 as it’s clearly the way the richest pupils get great grades. Just because methods are effective for one measure, doesn’t mean they’re ethical or are effective by alternative measures.

Again, I really enjoyed reading this, thanks!

Thanks, I just stumbled on your post. I totally agree about looking at the studies in detail. I’ve started looking at Hattie’s influences and reading the meta-analyses he used. I’m amazed at what I’ve found – see class size here – https://visablelearning.blogspot.com.au/p/class-size.html

I am looking for others to add if they have time.

Hattie plus point: the first comprehensive attempt to bring together the world’s research in education and put it all on one scale – effect-size.

Hattie minus point: Books like ‘Visible Learning’ don’t tell you how to do the method and his later books seem more like his personal interpretation.

Geoff Petty’s ‘Evidence based teaching’ and the Marzano/Dean ‘Classroom instruction that works’, together with the EEF website, are all more helpful in how to apply the evidence.

Mike, you and Geoff Petty, in your consultancy on http://ebtn.org.uk/, never deal with the problems brought up by Tom above, or the many other mistakes and misrepresentations of studies by Hattie, the EEF, Marzano, etc.

Recently Andrew Old has shown the many mistakes of the EEF in the ability grouping analysis – https://teachingbattleground.wordpress.com/2018/04/02/the-eef-were-even-more-wrong-about-ability-grouping-than-i-realised/

Also, Greg Ashman has shown the issues with the EEF – https://gregashman.wordpress.com/2018/04/17/the-article-that-englands-chartered-college-will-not-print/

So what’s the point of promoting the implementation of dodgy research?
