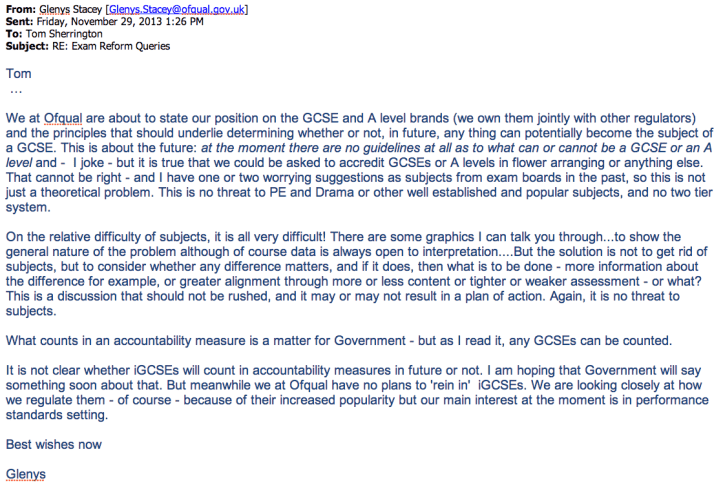

Last summer I had the opportunity to meet Glenys Stacey, Chief Regulator at OfQual. She contacted me after I’d written about exams on my blog and was keen to have an exchange of views. (Another power-of-blogging moment.) I now feel comfortable contacting her directly to ask what is going on with various examination issues. She is very open to this. In the email above, Glenys is responding to a question about ‘soft subjects’ – a media-driven phrase. I was concerned that some of my Headteacher colleagues had got it into their heads that PE and Drama were going to be scrapped or down-graded and were talking about changing their curriculum offer. I asked whether this was true and, if so, what the evidence base is for this kind of thinking.

The response is very interesting. Most importantly, there is no such threat to these subjects. However, she does suggest that there is some data that gives rise to concerns in some areas – not necessarily long-established arts subjects. There are different distributions of outcomes between subjects and some modes of assessment are less discriminating than others. Calling them ‘easy’ or ‘difficult’ is too simple. Glenys raises the very interesting question about aligning subjects in terms of modes of assessment or accepting differences and being open about them.

For me, that shifts the onus onto subject associations to work with exam boards to ensure that different disciplines have assessment processes that are demonstrably rigorous and comparable with others. That is the best way to preserve them in the long term. For example, for the sake of argument, there is no question whatsoever that PE/Drama matter. But does the current method of assessing PE/Drama allow students the best opportunity to show what they can do and is that sufficiently comparable with what happens in English, Music or History? That’s the question – not whether the subject is soft or not. They are not soft. Can we have an intelligent discussion about this without people stomping around the place getting defensive? I’m less confident about that.

This thinking also relates to the issue around Speaking and Listening – as discussed in this post: GCSE Tactics Stick or Twist – and practicals in Sciences. It is well worth reading Glenys Stacey’s blog, issued this week. (Blogging! It is the best way to get your ideas across.)

This raises a really important question: Does it enhance or diminish the value and integrity of some modes of learning if they are included in the formal assessment for national qualifications? As a science teacher, I think the move to remove direct practical skills assessment from GCSEs and A levels is an excellent idea. I already enjoy this with the AQA Science Certificates (iGCSEs) we use at KS4. Practical work has become part of the learning experience but isn’t morphed into a crazy hoop-jump as was the case with the ISAs we did before. No real science is like an ISA and in any case, the range of marks was tiny; it’s a bad assessment, leading to knife-edge grade boundaries. We do more practical work than ever because it is integral and organic – not associated with formal assessment. The same would be true at A level. I’m comfortable with an exam about practical skills; I’m not worried that students’ skills won’t be directly assessed.

I think a similar case can be made for Speaking and Listening. Many people feel deeply passionate about the value of Speaking and Listening in the English curriculum (you must read the comments below the GCSE Stick or Twist post... remarkable), but I still don’t believe it is made any less important because it is unshackled from being formally assessed. In any case, the process has become corrupted at a national level and I can’t see that genie going back in the bottle.

Further Thoughts:

I met some Headteacher colleagues this week who were still talking the language of conspiracy around grade boundaries, early entry and the like. I think we need to refresh our understanding of these matters:

1) Grade boundaries always shift; they need to in order to have any sense of what standards mean. I’ve explained this in my Bell-Curve post. It isn’t legitimate to suggest that grade boundaries, year to year, have been politically motivated or that, in some way, they run roughshod over students’ work. That simply doesn’t happen in any real, practical sense. These decisions are taken by technocratic folk looking at data, pure and simple. You can’t merely look at a paper and say that it is the same standard as another – you need to see how well people do; if the national cohort does much better on a paper, it means it was easier – even if it didn’t look like it on the face of it.

2) Early entry and multiple entry skew the data-set on which all grades are based. That needs to be recognised. It wasn’t ever a neutral decision to take public exams multiple times, as if each sitting is independent. It troubles me that schools have regarded public exams as a neat way to do interim assessments – as with the use of Core Science exams as a way to determine whether students do Double/Triple. These assessments used to be called ‘Mock Exams’. Teachers should have the skills to set their own exams and determine sensible grade boundaries to inform their decision rather than creating additional skew effects on the entire system. So, although the timing of the announcement in September was crass… the removal of multiple-entry incentives for schools should be welcomed. If students need several attempts, that’s one thing – but let’s not continue this talk of conspiracy around this. It wasn’t cheating but it was definitely distorting the system and that didn’t do us any good at all.

3) The big weaknesses in the system remain the quality and consistency of marking and, to some extent, the design of exam papers. It is good to see that some action is being taken to strengthen these things and, perhaps, by having fewer exams in the system altogether, the talent pool will be stretched less thinly than it has been.
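To make point 1 concrete, here is a deliberately simplified sketch of why a boundary has to move when a paper turns out easier. It assumes, purely for illustration, that a fixed share of the cohort should reach a given grade; the function name, the marks and the 30% share are all invented, and real awarding draws on far more evidence than this.

```python
def boundary_for_share(marks, share):
    """Return the lowest mark such that roughly `share` of the cohort scores at or above it."""
    ranked = sorted(marks, reverse=True)
    # Number of students who should sit at or above the boundary (at least one)
    k = max(1, round(share * len(ranked)))
    return ranked[k - 1]

# Hypothetical cohort: the same ten students on a harder and an easier paper.
# On the easier paper everyone scores 8 marks more (an invented assumption).
harder_paper = [32, 38, 41, 45, 47, 50, 52, 55, 58, 63]
easier_paper = [m + 8 for m in harder_paper]

share_at_top_grade = 0.3  # assumed share, for illustration only

harder_boundary = boundary_for_share(harder_paper, share_at_top_grade)
easier_boundary = boundary_for_share(easier_paper, share_at_top_grade)
print(harder_boundary, easier_boundary)
```

The same three students clear the boundary on both papers; only the mark needed to do so has changed. A fixed boundary would have rewarded or punished the cohort for the paper rather than for their work.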

Finally, having met and discussed these issues with Glenys Stacey and Amanda Spielman, the Chair of OfQual, I think we need to do more to engage with these discussions. I find that the level of understanding of how exams work is pretty low in the profession. The issues are far more technical than political and it’s about time more people recognised that. OfQual certainly operate within a broad political environment as we all do but Michael Gove does not have a direct line to OfQual to get them to do things. There are some communication and timing issues – mistakes have been made there for sure – but, as is usually the case, this is more likely a cock-up than conspiracy.

On the back of the English GCSE grading problems of a couple of years ago, Glenys Stacey requested to visit our school to discuss issues and how to move forward, and I have had several communications with her about general exam-related issues since. I have found her open and, perhaps disarmingly, honest about some of the challenges that OFQUAL face.

I’d been discussing the issues around comparability of subjects with her – initially because our MFL team noted that, year on year, Raise on-line ‘shows’ that (on average) students get grades half a grade lower in German and French than those exact same students do in their other subjects. With comparable outcomes being used, this ‘harshness’ is effectively locked in forever.

In an ideal world a grade A (or grade C or grade E or whatever other grade you pick) should represent the same level of achievement irrespective of the subject being studied. There should be no easier or harder subjects. On average each subject should be equally difficult (obviously for individual students that will not be the case but given enough students the averages should be).

Glenys kindly invited me to OFQUAL HQ to meet her and Cath Jadhav (Director of Standards) to discuss the issue further. It was a really useful meeting and I got the chance to look at some of those charts that I think she was mentioning to you, Tom. There really is a noticeable difference in outcomes between KS2-KS4 progress across subjects – whether this should be called ‘easiness’ or not I am not sure.

My reading of the charts was that sometimes a single subject might prove to be “harder” to get the top grades but “easier” to get the lower grades – a general “scrunching together” of grades in the middle with fewer top or low grades awarded.

One thing that struck me was that OFQUAL could, probably, sort this by drawing a line in the sand and then doing some statistical jiggery-pokery for the next year to align the subjects much more closely.

Note this wouldn’t mean the same proportion of students getting A*-C in all subjects, as some subjects tend to have more able catchments (based on KS2 results) and others less able. What it would mean is that, when averaged across the tens of thousands of students, those taking subject X would, on average, gain the same grade as in their other subjects.
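As a rough sketch of the comparison being described, the snippet below works out, for each subject, how far students’ grades in that subject sit from the average of their own other subjects. The student names, subjects and grade points are entirely made up, and `subject_gaps` is a hypothetical helper, not anything OFQUAL or Raise on-line actually uses.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical results: student -> {subject: grade points}
results = {
    "s1": {"German": 5, "History": 6, "Maths": 6},
    "s2": {"German": 4, "History": 5, "Maths": 4},
    "s3": {"French": 6, "History": 6, "Maths": 7},
    "s4": {"German": 6, "French": 6, "Maths": 7},
}

def subject_gaps(results):
    """Average, per subject, of (grade in subject) minus (mean grade in the same student's other subjects)."""
    gaps = defaultdict(list)
    for grades in results.values():
        if len(grades) < 2:
            continue  # no other subjects to compare against
        for subject, grade in grades.items():
            others = [g for s, g in grades.items() if s != subject]
            gaps[subject].append(grade - mean(others))
    return {subject: mean(values) for subject, values in gaps.items()}

gaps = subject_gaps(results)
for subject, gap in sorted(gaps.items()):
    print(f"{subject}: {gap:+.2f}")
```

A negative figure means students tend to do worse in that subject than in the rest of their own subjects. Aligned subjects, in the sense above, would all have a gap close to zero once averaged over a large enough cohort.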

Of course any suggestion that OFQUAL should use statistics to manipulate grade boundaries across a range of subjects in this way would be likely to cause uproar as results in some subjects would dive downwards nationally.

It could be argued that, with the new GCSE grading and specifications coming in, this presents an ideal opportunity to get the degree of difficulty between subjects aligned. But, of course, it’s not possible to do that with any degree of accuracy until student marks from exam papers are in during that first summer.

For me the crucial change is that the new school accountability measures (KS2-4) are based on the actual subjects students have studied.

It is proposed, although the DfE are still working on the details of it, that each student gets a target points score that is based on their particular list of subjects being taken. These targets will be based on the actual KS2-4 progress for equally able students in those exact same subjects being studied (this data actually already exists through FFT A chance tables).

If students take “easier” subjects then their target points score is a little higher than if they took ‘harder’ subjects. If the DfE can make this work then we are genuinely looking at how well those students have been taught in the subjects taken rather than being equally dependent upon whether the school pushes students towards certain subjects or certain qualification types that have easier KS2-4 progression.
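Since the DfE are still working on the details, the following is only a sketch of the idea as I understand it, with invented numbers throughout: each subject has an expected points figure for students of a given KS2 starting point, a student’s target is the sum over the subjects they actually take, and progress is measured against that target.

```python
# Hypothetical average points achieved nationally by students in a given
# KS2 band, per subject. These numbers are invented for illustration.
expected_points = {
    ("middle", "German"): 4.5,
    ("middle", "History"): 5.0,
    ("middle", "Drama"): 5.5,
    ("middle", "Maths"): 5.0,
}

def target_score(ks2_band, subjects):
    """Target points for one student: sum of expected points over the subjects taken."""
    return sum(expected_points[(ks2_band, subject)] for subject in subjects)

def progress(ks2_band, actual):
    """Actual total points minus the target for that student's own subject list."""
    return sum(actual.values()) - target_score(ks2_band, actual)

# Two hypothetical students with the same KS2 band and the same total of 15 points
student_a = {"German": 5, "History": 5, "Maths": 5}
student_b = {"Drama": 5, "History": 5, "Maths": 5}

print(progress("middle", student_a))  # measured against a target of 14.5
print(progress("middle", student_b))  # measured against a target of 15.5
```

Both students finish on the same raw total, but the one who took the subject with the lower expected points shows positive progress and the other shows negative progress, which is the effect described above.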

Thanks Steve. I agree entirely with what you are saying here – and probably more people should have access to the information you’ve seen that gives the full picture. The statistical work behind the scenes should be more widely shared – I think it would build confidence. A lot of commentary has a conspiracy-theory flavour when, in fact, the evidence of a range of issues is there to be seen. Thanks for this very interesting extended comment.
