After announcements about new proposals for KS2 assessment, the issues around relative and absolute standards are getting a working over. Director of The Institute of Education, Chris Husbands, has written a thoughtful blog on some of the issues. I’ve had personal reasons to engage recently as the parent of a Year 6 student who has just received his SATs results. The playground and school gate are buzzing with ‘who got what?’

My feeling is this. Firstly we need to hold on to the notion of a broad education where only certain aspects can be assessed. We need to give value to the learning experience in all its diverse glory, going far, far beyond what can be measured in tests. There are ways of doing this… but that’s another post. However, at the same time, we need to recognise that formal assessment is an important element in the big picture. Here we need an honest re-assessment of what we mean by standards; we need to face the reality that absolute standards are very difficult to isolate from a deep-seated devotion to the bell-curve.

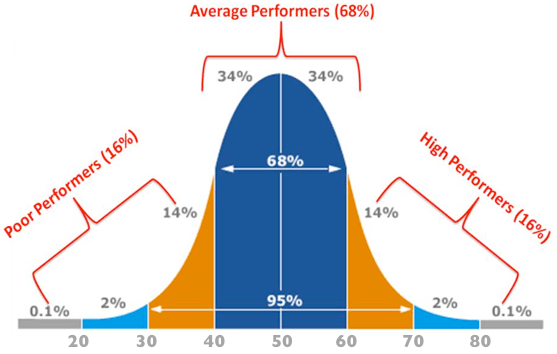

Of course we should also acknowledge the limitations a bell-curve philosophy imposes on us. Some MPs and newspaper editors don’t seem to understand that “Shock!! HALF of all students are BELOW AVERAGE!!” is a joke. With the trailed idea of placing children in deciles after KS2 tests, we are likely to see “Disgrace! Still only 10% of students are progressing to the top decile!!” I predict that this will happen. It will.

Similarly, politicians need to get their sums right. There is a fairly profound schizophrenia that leads to people jumping up and down about standards slipping because too many people gain As and A*s at GCSE. This is ‘proof’ that standards are falling. At the same time, there is some kind of outcry that students reaching varying degrees of Level 4 at KS2 then go on to reach different standards at GCSE.

This shock news that students with lower attainment at KS2 subsequently also gain fewer GCSEs on average… is worrying. Don’t they understand how the system works? If all these L4s actually did turn into 5 A*-Cs with English and Maths, the shouts of ‘dumbing down’ would drown out the cheers of celebration – by the same people. There’s not enough room up that end of the bell-curve.

However, before we go all Watergate, Grassy Knoll, Area 51 on all of this, let’s examine our own sense of what we mean by standards. We can all acknowledge that marking extended writing is a messy business. We can give an impression mark using some criteria; we can count up some definable features – we can give a score out of 30 referencing some exemplars. All of this is complex. Try getting two or three people to agree the mark or level on 10 pieces of work… then scale that up to 2000 markers and see the scale of the issue. What is a Level 4A? How do you measure it? On a comprehension paper, if the marks vary from one year to another, how do we know whether it was a hard paper or a less successful cohort of students? We need reference points and these generally arise from the cohort. It is a safer bet that each cohort has a similar ability profile year on year than that the tests we set each year are of the same standard – especially on a national scale.

Now let’s look at something that is purely objective. The High Jump. Imagine 1000 children being asked to do the high jump – give them a few attempts to get their personal best. There is no limit imposed on them. Imagine John goes home and tells Dad “I jumped 120 cm today”. He’s delighted of course… but then asks ‘Was that good? How high did everyone else jump?’ It’s the obvious question. Without any reference point, 120cm means little. It could be very high, average or very low – Dad needs to know what the background profile is in order to gauge his son’s achievement. 190cm is an exceptional jump because of how it compares to everyone else. 50cm is rather poor – but only because almost anyone could jump this high. Of course we can talk about progress, about personal bests, personal triumphs, disability and so on… but every child will also want to know where they stand along the line before their personal achievement makes sense.
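To make the high jump point concrete, here is a minimal sketch in Python. The two cohorts are made-up numbers, not real data, and `percentile_rank` is just an illustrative helper name. It shows the same 120cm jump landing in very different places depending on the background profile.

```python
from bisect import bisect_left, bisect_right

def percentile_rank(value, cohort):
    """Percentage of the cohort below `value`, counting ties as half."""
    ordered = sorted(cohort)
    below = bisect_left(ordered, value)
    ties = bisect_right(ordered, value) - below
    return 100.0 * (below + 0.5 * ties) / len(ordered)

# Hypothetical personal bests in cm (invented for illustration only).
cohort_a_cm = [85, 95, 100, 105, 110, 110, 115, 120, 125, 140]
cohort_b_cm = [115, 125, 130, 135, 140, 145, 150, 155, 160, 190]

johns_jump = 120
rank_in_a = percentile_rank(johns_jump, cohort_a_cm)
rank_in_b = percentile_rank(johns_jump, cohort_b_cm)
print(f"Cohort A: {rank_in_a:.0f}th percentile")
print(f"Cohort B: {rank_in_b:.0f}th percentile")
```

The measurement is perfectly objective in both cases; only the comparison group changes what it means.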

The same is true of piano exams. The grades 1 – 8 are chosen at intervals of difficulty that span the typical range of human ability playing the piano. It would be daft to set Grade 1 so high that no-one could pass or so low that anyone could pass. It represents a fair challenge – a standard – for beginners. This is uncontroversial. Even within the cohort of Grade 1 entrants, there is a range. A bell-curve. Some fail, some pass, some get Merit and some get Distinction. These measures are defined with reference to a background spread of ability within what is possible given the pieces and scales (the curriculum) set for Grade 1. There is a good sense of piano exams being absolute standards, but every aspect has an origin in relative standards. As I’ve described in Data Delusion Solutions, piano exams are excellent. In passing Grade 1 – and since 2 and 3 – my son did not feel that he failed Grade 8. He started out at the bottom end of the bell curve and is making his way along at his own pace. The structure of assessment builds confidence at each step whilst remaining challenging continually… but he knows where he stands.

Looking at NC levels, and subject based assessment in general, of course this is still true. We might like the idea of fixed benchmarks that anyone can meet but, in practice, do we really?

- Elif can spell ‘accommodation’

- James can multiply any three digit number by any other

- Hassan can describe the formation of an ox-bow lake

- Louisa can write a coherent paragraph analysing Othello’s character flaws.

- Joshua can work out the nth term of a linear sequence.

- Eni can state which of two compounds is formed via ionic bonding.

These statements of what children can do appear to be absolute. But, if they are used to set standards, they are no use unless we know how difficult they are. So – we ask 1000s of children questions that tease out whether they can do these things and see what we get. If lots of children can do something – we say it is easier than something only a few children can do. Absolute standards only fully gain meaning in reference to a cohort. Of course this is how SATs tests work anyway. In Maths, tests are marked and scored and the scores are morphed into levels. 6C and 5A are literally just different sections of a bell-curve based on marks on a test.
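One way to picture "how difficult is it?" is as the proportion of children who get an item right, sometimes called a facility value. The sketch below uses invented responses for ten pupils on three of the statements above. The data and the `facility` helper are assumptions for illustration, not anything drawn from real SATs papers.

```python
def facility(responses):
    """Proportion of pupils answering correctly (1 = correct, 0 = incorrect)."""
    return sum(responses) / len(responses)

# Made-up results for 10 pupils; not real test data.
results = {
    "spell 'accommodation'": [1, 1, 0, 1, 1, 1, 0, 1, 1, 1],
    "nth term of a linear sequence": [1, 0, 0, 1, 0, 1, 0, 0, 1, 0],
    "identify ionic bonding": [0, 0, 0, 1, 0, 0, 0, 1, 0, 0],
}

# Order the statements from hardest (fewest correct) to easiest.
ranked = sorted(results, key=lambda item: facility(results[item]))
for item in ranked:
    print(f"{item}: {facility(results[item]):.0%} correct")
```

The "can do" statement only acquires a difficulty once a cohort has attempted it, which is the sense in which the absolute standard leans on a relative one.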

English is much closer to a criterion referenced assessment process, but when the new tests come in, it will soon emerge that certain scores fit in certain places on the bell curve in much the same way as levels do now. So, unless we change how well children are taught, new tests and new measures are really just cosmetic… we just get rank order described slightly differently and slightly more explicitly.

So – big reality check. Here are some truths about exams.

- Exams are a competition. Not everyone can get the top grades… because outcomes that deviate from a bell-curve do not fit with our values system around excellence. The system continually reacts to changes to ensure outcomes revert to the bell-curve; it’s a strong feedback loop.

- Assessment is fuzzy-edged. Levels and GCSE grades cannot be assessed accurately enough to withstand the scrutiny they are placed under. Every year, students from across the same national cohort will get different grades for work of the same standard – it is inevitable and unavoidable, especially in subjects where examiners need to reference success criteria.

- Grade inflation is systematic if examiners are asked to give candidates the benefit of the doubt on the 2% tolerance for grade boundaries. We either accept it or stop it dead by fixing grades.

- In any cohort of a large size, students given a test which doesn’t place limits on achievement at the top will generate outcomes that are very close to a bell-curve based on raw data. Turning this data into NC levels or GCSE grades is a distortion of the raw data and any notion that a grade or level describes specific learning is wrong. Students with the same level or grade will be able to do different things; the link is not the nature of what they know, but their position on the bell curve.

- Absolute standards can go up over time. The whole nation could get better at maths and reading. However, in our current system, the exam boards and Ofqual have too many reasons to resist giving that impression: there is too much evidence that standards are not improving; too many examples of accountability driven outcome improvements that aren’t driven by genuine cognitive development and, consequently, the bell-curves are being held firmly in place. I think we should be glad about that at the moment because, otherwise, the gaming machinery will win the day.
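The grade inflation point in the list above can be sketched as a toy model. Everything here is an assumption made for illustration: raw marks follow a normal distribution out of 100, the 2% tolerance is treated as 2 marks, and each year's boundary is set to reproduce the share awarded the year before. It is not a description of how any exam board actually sets boundaries.

```python
from statistics import NormalDist

marks = NormalDist(mu=50, sigma=15)  # assumed raw-mark distribution, out of 100
tolerance = 2.0                      # assumed: 2% tolerance treated as 2 marks
boundary = 65.0                      # assumed starting boundary for the top grade

shares = []
for year in range(5):
    # Candidates just under the boundary get the benefit of the doubt.
    awarded = 1 - marks.cdf(boundary - tolerance)
    shares.append(awarded)
    # Next year's boundary is set to match this year's awarded share.
    boundary = marks.inv_cdf(1 - awarded)

for year, share in enumerate(shares, start=1):
    print(f"Year {year}: {share:.1%} awarded the top grade")
```

Under these assumptions the cohort never changes, yet the awarded share creeps up every year. That is the sense in which the drift is systematic unless the proportions are fixed.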

Finally – before I go on for ever… let’s look at ranking. People have been getting worked up about the idea of giving students a decile ranking. It feels wrong… but I’d argue that telling a student they are in Decile 3 or Decile 9 isn’t far off saying 6C or 3B. Either way you know where you stand… and the process for determining them is pretty much the same – you take a test and see how you did compared to everyone else nationally.
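As a rough sketch of "take a test and see how you did compared to everyone else", the snippet below simulates a national cohort and turns a raw score into a decile. The score distribution is invented, and numbering the deciles from 1 (lowest) to 10 (highest) is my assumption, since the proposals don't spell this out.

```python
import random
from bisect import bisect_right
from statistics import quantiles

random.seed(1)
# Simulated national raw scores out of 100 (an assumed bell-shaped spread).
national = [min(100, max(0, round(random.gauss(55, 15)))) for _ in range(10_000)]

# Nine cut points split the cohort into ten equal-sized bands.
cuts = quantiles(national, n=10)

def decile(score):
    """Return 1 (lowest tenth) to 10 (highest tenth) for a raw score."""
    return bisect_right(cuts, score) + 1

for score in (30, 54, 80):
    print(f"Score {score} -> decile {decile(score)}")
```

Swap the nine cut points for level thresholds and the same lookup produces 3B or 6C instead; the mechanism is the same.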

If this does get introduced we need to work Growth Mindset thinking into our practice regardless. Here is a ray of hope. At KEGS, students gain a place based on their rank order on our entrance exam. They know their rank order because this affects several schools that use the same test. However, we use this image to reassure them (and warn them) that rank order means little after a couple of years.

Our intake is taken from the far right-hand end of the bell curve… Here, there is significant statistical fluctuation so on any given test over time, rank order would vary. However, it is a good message to give our students that they could be first or last when they started – it is still all to play for as they move through the school. The same will apply to deciles… we can’t allow them to become a barrier to improvement or a mechanism to crush self-esteem.
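The fluctuation in rank order within a narrow band can be illustrated with a small simulation. This is a sketch under stated assumptions, not KEGS data: each pupil has an underlying ability, two tests each measure it with some noise, and the noise level and the 5% selection cut-off are arbitrary choices.

```python
import random

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5

random.seed(0)
ability = [random.gauss(0, 1) for _ in range(20_000)]
entrance = [a + random.gauss(0, 0.5) for a in ability]  # test at age 11
later = [a + random.gauss(0, 0.5) for a in ability]     # test some years on

r_all = pearson(entrance, later)

# Keep only the top 5% on the entrance test, as a selective intake would.
cutoff = sorted(entrance)[int(0.95 * len(entrance))]
selected = [(e, l) for e, l in zip(entrance, later) if e >= cutoff]
r_top = pearson([e for e, _ in selected], [l for _, l in selected])

print(f"whole cohort r = {r_all:.2f}, top 5% r = {r_top:.2f}")
```

Across the whole cohort the two tests agree closely, but inside the selected slice the correlation drops sharply, so the entrance rank order tells you much less about who comes out where.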

UPDATE: From meetings at the DfE, where nods and winks are given by advisers, I doubt very much that the KS2 deciles will see the light of day. Protestors, stand down. But whatever we do get, let’s not hope to avoid the inevitability that some students will always do much better and worse than others. There will be a bell curve. The only question is how this is presented and how obvious it is to parents where their children sit along the curve.

An excellent blog, and an excellent explanation of something that everyone should already know (especially anyone involved in education or politics), but I guess they don’t. It is so good I actually printed it out (the first time I have done that with a blog).

Interesting to see the lack of correlation between achievement in the selection test and outcome at GCSE. That means, of course, that there are students who didn’t rank high enough to get into KEGS who would, though, have done better than some of those that did make it…(?).

Thanks for the comment. We’re very aware of the fuzzy nature of our boundary for selection; it comes down to one or two points on a standardised score. However, the absolute values graph looks different – the spread of 11+ scores is bunched at the lower end while the GCSE points scores are bunched at the higher end – because A* is a cap on their attainment. Also these students are getting nearly 700 GCSE points on average – where A* = 58; not many of their counterparts of similar starting point aged 11 get close to that. I’ve written about the selection rationale here: http://headguruteacher.com/2013/06/09/thoughts-on-school-admissions/

Really good post. I like the reference to piano grades – it fits with my philosophy of ‘assessment when ready’ using tests that are designed to really explore the level you are trying to acquire in some depth (unlike, for example, a KS2 test aimed at categorising pupils from Level 3 to 5, or a GCSE paper spanning many grades). It reminds me of the ‘Single Level Test’ concept that was being trialled a few years back (Key Stages 2 and 3) which, in my view, was a sensible approach. Then it was suddenly dropped before the trial was complete for what appeared to be political and ideological reasons.

I’m not sure I entirely agree with your final point though – that it is no different telling a pupil that they are 6c or 3b than it is telling them in which decile they are ranked. *In theory* the levels should actually *mean something* in terms of a set of skills that have been acquired. And if the DfE’s goal is that every pupil should leave primary school with at least a level 4b, then that ought to be actually achievable, provided that the level retains its meaning in terms of the skills that must be acquired. (This might be more of an argument for teacher assessment rather than tests, given some of the issues around test standardisation that you have illustrated.) The decile band is, in my view, not a helpful piece of information to give a child. “At this moment in time, based on your performance on this day, this is how you compare to everyone else.” By the time you receive the information, it’s probably already wrong (if indeed it was ever right).

So your reference to Carol Dweck and the Growth Mindset is extremely encouraging and, I agree, absolutely essential. If only our politicians could understand the negative effects of stigmatising pupils with labels, it might make the job of the teacher – to inspire, motivate and instil a passion for learning – somewhat easier. Glad to hear that the growth mindset philosophy is alive and well at KEGS – it sounds to me like there have been some considerable changes since I was there!

Ben Fuller (KEGS alumnus 1984-91)

Assessment Adviser – Hertfordshire

Hi Ben. Thanks for the comment. I think we need more detail on the KS2 tests but from what has been said already, the decile accompanies the actual score. The decile then contextualises the score. I think that is very similar to the levels. My son has just had his KS2 results. It wouldn’t be much use to me (even as a teacher) if I didn’t have info to tell me where his scores sit on a continuum. Levels give an indication of what is required but when a child is say 4A, not 5C, no parent would know exactly what that meant or what it was that they would need to improve on to move up especially when it is just a mark or two on a test. The real difference between one sublevel or the next is getting a couple of questions wrong; it is less fundamental than people think. The test score is a more accurate representation of the assessment process.

I believe in creating a framework for a rounded education – but within that, national assessments have their place and I can see deciles being useful in that context, alongside the raw test scores. This is really just a reconfiguration of how levels work in practice.

Good to see another Ex-KEGS student in the profession!

But, as a parent, did you actually receive your son’s levels in terms of (e.g.) 4a or 5c? The standard template shows only whole levels – and I think it needs to be accompanied by some explanation of what the age-related expectation is. If you were told sub-levels too, well yes that would require much explanation, and there is the danger that many parents would read more into it than the reliability of the test really warrants.

I think a key point is the difference between tests and a teacher assessment. It seemed, a few years ago when there was a big push on ‘APP’, that we might be moving towards a system of teacher assessment (based on children’s actual skills and knowledge) becoming a more significant factor in the accountability framework (and indeed we do now have teacher assessment only for writing). I understand your point that, on a test, the difference between a 4a and a 5c is just a few marks, whereas if those sub-levels are based on a teacher’s judgements, drawing from a wide range of classroom learning experiences, I think the difference becomes more meaningful. The problem with any test is the large margin of error. Our approach to accountability seems to be quite content to base everything on a system with far less than 100% validity or reliability, without really questioning that fact much of the time. It concerns me that a child could be told they have attained in, say, the third-from-bottom decile, when the margin of error might span from the median (or above) down to the bottom decile.

I feel that the current proposals are a terrible waste of an opportunity to really radically re-think the ways we assess our young people and the consequences those assessments can have on them. I really don’t think this is what the ‘Expert Panel’ had in mind when they raised the issue of the problems with levels.

Hi Ben

Yes, we did get the sublevels – I think that is fairly standard. Primary teachers talk in sub-levels a lot and we’ve had those reported to us every year. I know this is true of most other parents I speak to.

We all need to understand that 100% validity is only achievable if we design tests where answers are only right or wrong. Any test that requires a judgement to be made can’t be perfectly reliable or reproducible. The decile system only works as an indicator of the relative meaning of the score, i.e. 54 out of 100 is a 3rd decile score – on that test. Obviously if the deciles are very narrow bands then you are correct about the error margin. We’d need to know the band widths too…

I’ve never set out to validate deciles as a positive move per se; I’m just pointing out that the current system is exactly the same to all intents and purposes and we are deluded if we think any test is or can be based entirely on absolute standards. That can never happen.

Levels don’t work because they suggest they are something they are not; deciles are more raw perhaps but at least they are more transparent. I agree that a wider review is needed.

:))

[…] are ultimately defined by comparison as I suggest in my most recent post on this subject: Assessment, Standards and the Bell Curve. Basically, if you don’t want to compare students, then don’t ever give them the same test. […]

I’ll start with a different angle: I LOATHE the cruelty I perceive in the words “Secondary readiness” because I expect we will have some children going to secondary school who, one way or another, will have been told about that verdict and believe they’re not fit for their school. I think there’s a world of difference in their self-ranking based on a low score/level e.g. “I’m rubbish at Numeracy” to what might easily be internalised as “I don’t belong here”.

Bell curve #1: Not sure what we’ll get next year, but I played for a little longer when converting my end-Y5 child’s *sub-levels* into rough percentages (as you do having wearily plodded through the cut & paste criteria and figured out their approximate in-class ranking) and the results distributions don’t look too pretty i.e. you can see a lot of distortion courtesy of the floor-levels. I thought we’d been promised some relief from perverse incentives, but although two-thirds of a level might represent a year’s learning we have that insistence on 4c-4a equivalence you’ve noted above, and that follows much the same “logic” for 5c-5a in and around Ofsted’s ‘most able’ report.

Bell curve #2: Every child.. full potential… glass ceilings.. stretch the brightest i.e. do something about that truncation at the extreme right of the results graphs, but they want 85% in the space for GCSE C-A*, have promised one-size-fits-all-abilities (including L6) assessment at the end KS2 and rarely a month goes by without some political gap-narrowing rhetoric. I’m glad I don’t have to tick all those boxes.

11+ rank vs. GCSE points: Doesn’t look like that out here of course e.g. the closest I can find in bog-standard land are the RAISEOnline transition matrices where KS2 sub-level appears to be a fairly good indicator of specific GCSE grades in English and Maths. That said I don’t know how much of that is unconstrained progress or the moderating effect of the system.

Finally, two of my daughter’s three targets for Y6 next year are essentially about exam technique but given some near-perfect recent exam performances those targets are absurd. It’s already depressing to see state assessment trumping learning at this age, but I expect it will get worse when schools are in the thick of new-improved assessment/accountability.

Thanks for your thoughts. The scatter diagram is included to illustrate that, within a particular ability band, rank order is highly variable. Across the whole ability range, as you suggest, the bell curve looks more like pre-determined outcomes. I share your concern about the design of the KS2 test: what will it look like? We’re putting far too much pressure on a process that can only deliver a very specific form of outcome.

The progressive grades we’ve proposed at Heads’ Roundtable would solve some of these things. But I do worry that some people almost literally don’t think it is fair for some children to do better than others…in any assessment regime. Also some people insist on a value added measure for Primary schools but simultaneously baulk at the idea of baseline assessments in Early Years. Can’t have it both ways.

In response to a “What if…?” question my last piano exam pep-talk included: “I’m absolutely certain you’re grade [n] and if you accidentally glitch today it doesn’t matter because there’s *nothing* to stop you starting grade [n+1] material tomorrow. You could take grade [n] again during the next round of exams in a few months time, but only if you want to add that certificate to your collection.”

Progressive exams seem quite compelling, but much depends on the ‘operational framework’ for them in the real-world. Relations with seriously pushy parents might also become more ‘interesting’ given that there are plenty of horror stories around demands re. children’s progress towards grade 8 piano i.e. I’d expect quite a few pantomime quarrels over “Ready”.

I’m wary of Reception year baselines because my child is a summer-born and she was treated to some of the adult perception problems. What will they measure and how effectively for that tricky age *range*?

Hi Tom

Great article, as always.

It would seem that the very low number of Level 6 for Reading at Y6 doesn’t fit with the bell-curve theory: is that right and, if so, what is the (your) explanation for it? Or am I just misunderstanding?!

Cheers

Chris

On another point, I’m a little surprised that you have had sub-levels reported. Whilst writing (other than SPaG) is Teacher Assessment anyway, I can only think that you’ve been given the TA for Reading & Maths (which may be different from the test) or Target Tracker’s interpretation of the raw scores?

Thanks Chris. We definitely had sublevels reported. It is a school generated report. 6B for SPaG; 5A for reading; 6B for Maths – all for tests with TAs reported alongside. It may well be the school’s interpretation but it is presented to us as if it were the definitive outcome. My feeling is that if the school can see the sub-levels, parents should see them too, even if they’re not absolutely solid.

[…] How do we stop this? It strikes me that we need to do a lot more work on establishing what standards mean, without using a proxy code; we should be doing it by looking at the actual work. Teachers should be discussing this extensively within schools; schools should be sharing this information extensively between them. Forget levels; good riddance to them – they were only ever a bell-curve marker anyway. Read this if you still think levels define absolute standards – they don’t. […]

One thing this ignores is that outside the academic school system assessment can be for a purpose different to grading across a bell curve. If I’m an employer with a job that needs a particular skill, my primary interest is in whether the person has that skill. In terms of the high jump analogy, if the task requires jumping consistently 1.5m there might well be no real benefit in jumping 1.9m. If the candidate can manage 1.5m consistently I might well be interested in other factors to decide who gets the job. How expensive are they to employ? Are they likely to turn up on time? Will they contribute to staff morale, etc.? Assessing competence at a vocational level is similar to sorting out the L4 a, b, c grades but it generally does not need the degree of precision. This makes it a lot less expensive to administer in staff time and gets round the difficulty that there is never an associated uncertainty published with the measurement, which makes it much more difficult to be sure of the validity of the results.

Now in a school setting there are circumstances where competence based assessment has a place but I’m not saying it should be the only type of assessment, far from it. If we use competence based assessment as a baseline, we can set an exam to provide grading from that baseline. Don’t let anyone take the grading exam until they have demonstrated the required competences. This then makes it less likely that anyone will be entered for an exam they are destined to fail. Grade the exam questions to spread out performance. This also provides opportunities for assessment for learning, personal target setting and peer review but without the overhead of having to grade levels with a precision that will inevitably have a significant administrative overhead. We want teachers teaching, not spending hours deciding grades to a precision that is probably not justified in any case. Furthermore we can use cloud based management systems to remove any need for paper at all, although there is nothing to stop using paper if it is more appropriate.

The snag with exam only approaches is that exams on their own can just as easily demotivate as motivate. Making exams harder will not make kids cleverer unless they are motivated by them. If you do well at exams you are likely to be motivated by them but the further that pass seems out of reach the more likely it is that you are just not going to bother wasting the time and energy. After all ask yourself if asking someone weighing 70kg and 1m tall to jump 2m will motivate them more than if the height is set at 1.5m? So the dilemma is the further towards the right hand end of the bell curve we make the pass mark the fewer people it is going to motivate. If we really want to raise standards overall, motivation is what is needed to do it. So is there a solution? Certainly there is not a perfect solution but probably there is a better one than simply relying on a terminal examination.

Currently over 20% of the cohort get A*/A grades in GCSEs. How many of them could have managed this a year earlier? Let’s say it was half, that is 10%, probably 50,000 of the most able kids marking time for a year. This is the nonsense of discouraging early entry and at the same time restricting attempts to one in a high stakes exam system. The road to hell is paved with good intentions. All the attention is on plotting the Bell Curve and none on putting attainment along the X axis and shifting it to the right.

[…] they need to in order have any sense of what standards mean. I’ve explained this in my Bell-Curve post. It isn’t legitimate to suggest that grade boundaries, year to year, have been politically […]

[…] all know that grade boundaries are created by a statistical overview of standards (all standards are derived from the bell curve, like it or not.) But we’re not as up-front as we should be about the blurred edges. It feels right to use […]

[…] Also, Assessment, Standards and the Bell Curve from Tom Sherrington […]

[…] Assessment, Standards and The Bell-Curve. […]

[…] writes about – what I perceive to be his forte – Assessment, Standards and the Bell Curve and how data is presented and how obvious it is to parents where their children sit along the […]

[…] Assessment, Standards and the Bell Curve. […]

[…] Assessment, Standards and the Bell Curve – Tom Sherrington […]

[…] So far, we’ve been seeking a focus on authentic assessment within subjects, breaking free from all the illusions and falsehoods of NC levels; the ladder of progress that never was. This has generated lots of great discussion in departments about standards and how we assess them. This has included reflections on the problem with ‘can do’ statements and the absolute importance of internal and external moderation of standards based around samples of work. We’ve embraced the idea that standards are relative, not absolute, in most contexts. (See Assessment, Standards and the Bell-Curve). […]

[…] Source: Assessment, Standards and the Bell Curve […]

[…] work on his assessment system using the bell curve comes to the fore. Tom first blogged about assessment, standards and the bell curve in 2013 and has since gone on to use the model in the KS3 assessment system developed at Highbury […]

Why should it be acceptable ‘that some students will always do better than others’? It would be far better to focus on individuals achieving their own goals. Class sizes would have to be hugely reduced, of course.

[…] on using based around work sampling. It’s definitely worth looking at see Tom Sherrington’s Assessment, Standards and the Bell-Curve […]

[…] https://headguruteacher.com/2013/07/17/assessment-standards-and-the-bell-curve/ […]

[…] this post: Assessment, standards and the bell curve if you want to explore the notion that standards are ever absolute. They’re […]

[…] you should read Tom Sherrington’s blog on assessment standards and the bell curve – same idea, I think, but a different […]

[…] I’ve explored in various posts – including Assessment, Standards and The Bell Curve, there are very few educational measures that are truly absolute: we almost always need to refer to […]

Really interesting piece. Loved reading it. I’m not sure we should be calling people at the lower end of the bell curve poor performers. Some of those students may have performed really well to get those grades. But really enjoyed reading it. Thank you.

[…] require standards to be set; standards require thresholds; thresholds reference a bell-curve. (See here if you don’t understand this – I do really get tired of people who argue against norm referencing as if it’s a […]

[…] Reference https://teacherhead.com/2013/07/17/assessment-standards-and-the-bell-curve/ […]
